OpenAI has officially announced GPT-4.5, also known as Orion, its most advanced AI model to date. Built with significantly more computing power and data, this model aims to push AI capabilities further. However, OpenAI clarifies that it doesn’t classify this model as a “frontier” breakthrough.

Who Can Access GPT-4.5?

For now, GPT-4.5 is available to subscribers of ChatGPT Pro, OpenAI’s $200-a-month plan. Developers using OpenAI’s paid API can also start testing the model immediately. Meanwhile, users on ChatGPT Plus and ChatGPT Team are expected to gain access next week, according to an OpenAI spokesperson.

How Much Better Is GPT-4.5?

Orion follows OpenAI’s traditional approach of scaling up computing power and data for better performance. Previous versions – GPT-1, GPT-2, GPT-3, and GPT-4 – saw significant improvements with this method, leading many in the industry to anticipate major gains with GPT-4.5.

While OpenAI claims Orion has “deeper world knowledge” and “higher emotional intelligence,” early benchmark tests suggest a more nuanced picture. On some AI reasoning tasks, GPT-4.5 underperforms compared to models from competitors like DeepSeek and Anthropic.

This raises questions about whether simply increasing data and computing power can keep improving AI performance at the same rate as before.

Is GPT-4.5 Worth the Cost?

Running GPT-4.5 comes with a hefty price tag. OpenAI is charging developers $75 per million input tokens (approximately 750,000 words) and $150 per million output tokens.

In contrast, GPT-4o, OpenAI’s widely used model, costs just $2.50 per million input tokens and $10 per million output tokens. Given these steep costs, OpenAI is still evaluating whether to continue offering this new model’s API access in the long run.
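To make the price gap concrete, here is a minimal sketch that estimates the cost of a single request at the per-million-token prices quoted above. The dictionary keys are plain labels chosen for this example, not confirmed API model identifiers, and the 10,000-input / 1,000-output workload is a hypothetical assumption.

```python
# Per-million-token API prices in USD, as quoted in this article.
# The keys are illustrative labels, not confirmed API model names.
PRICES = {
    "gpt-4.5": {"input": 75.00, "output": 150.00},
    "gpt-4o": {"input": 2.50, "output": 10.00},
}

TOKENS_PER_MILLION = 1_000_000


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at the quoted prices."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / TOKENS_PER_MILLION


if __name__ == "__main__":
    # Hypothetical workload: 10,000 input tokens and 1,000 output tokens.
    for model in PRICES:
        print(f"{model}: ${request_cost(model, 10_000, 1_000):.4f}")
```

At these prices, GPT-4.5 costs 30 times as much as GPT-4o per input token and 15 times as much per output token, so the overall multiple for a given workload depends on its mix of input and output.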

Where GPT-4.5 Shines (and Where It Falls Short)

Better Performance on Simple Tasks

On OpenAI’s SimpleQA benchmark, which tests models on factual questions, GPT-4.5 performs better than GPT-4o and OpenAI’s reasoning models, o1 and o3-mini.

Additionally, OpenAI claims the model hallucinates less often, meaning it should generate more reliable responses.

Struggles With Complex Reasoning

When it comes to advanced reasoning tasks, the story is different. On difficult academic tests like AIME and GPQA, Orion doesn’t match leading AI reasoning models such as DeepSeek’s R1 and Claude 3.7 Sonnet.

It handles math and science problems reasonably well, but it falls short of dedicated reasoning models on the hardest of them.

Can GPT-4.5 Understand People Better?

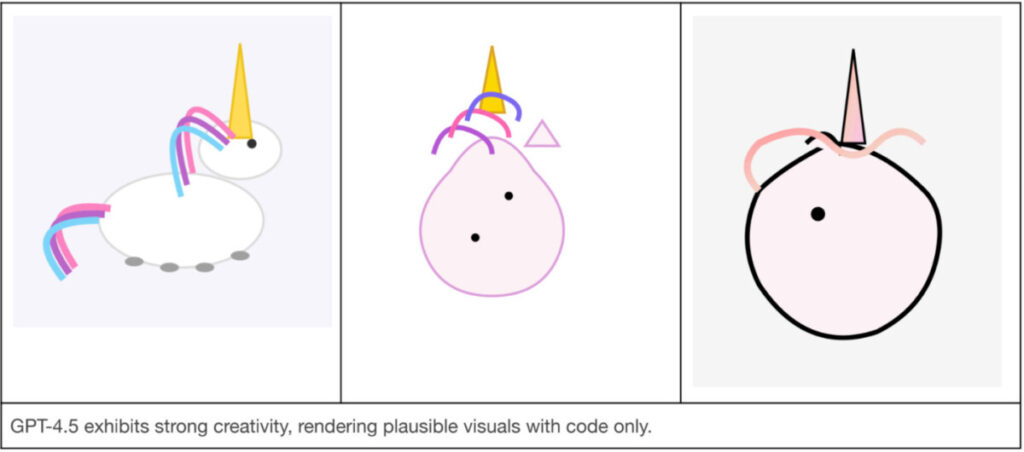

OpenAI suggests that Orion is better at understanding human emotions and intent compared to previous models. In one test, GPT-4.5, GPT-4o, and o3-mini were asked to generate an SVG image of a unicorn.

Only GPT-4.5 produced something resembling a unicorn. In another test, where the models responded to someone struggling with a failed test, GPT-4.5’s response was reportedly the most socially appropriate and empathetic.

The Future of AI Scaling

Orion’s release highlights a growing debate in AI research: Are traditional AI scaling methods hitting a wall?

OpenAI co-founder Ilya Sutskever previously stated that AI training, as we know it, is reaching its limits. Increasing computing power alone may no longer yield the same dramatic improvements seen in earlier AI models.

As a response, many AI companies, including OpenAI, are shifting toward reasoning models, which prioritize deeper problem-solving rather than just processing more data. OpenAI plans to merge its GPT series with its “o” reasoning models in future releases, starting with GPT-5 later this year.

What’s Next for OpenAI?

While GPT-4.5 doesn’t necessarily set a new AI benchmark, it serves as a stepping stone toward more advanced AI models. OpenAI’s focus now appears to be on blending generative capabilities with stronger reasoning skills.

Whether this approach will define the future of AI remains to be seen, but for now, this model offers an intriguing glimpse into what’s next.