Millions of people are turning to AI companions for emotional support, and Anima AI sits near the top of that list.

It promises a personalized virtual friend, or even a romantic partner, available around the clock. I spent two weeks testing it to find out whether that promise holds up, and whether the app is actually safe to use.

The short answer: it depends heavily on who’s using it and how. For adults who set clear boundaries, Anima is manageable. For teenagers, it isn’t safe. And for anyone already navigating mental health challenges, the risks are more serious than the app’s marketing suggests.

What Is Anima AI?

Anima is an AI chatbot app available on iOS and Android. It simulates human-like conversation, positioned as a virtual companion for chat, roleplay, and communication skill-building.

The setup is quick. You name your companion, choose an avatar from around 30 options (including some anime-style ones), and start chatting. The free tier is genuinely friendly and platonic.

Anima asks how your day went, remembers a few details about you, and responds with the kind of warmth a supportive friend might offer. But there’s a commercial dividing line. Once you pay for a subscription, around $10 per month, the relationship can turn romantic and quickly become sexually explicit.

That upgrade path is easy to miss on the first download, which is part of what makes the app’s safety profile complicated. What looks like a stress-relief chatbot at install can become something very different within a few taps.

Is Anima AI Safe for Adults?

For most adults, Anima is conditionally safe, and that conditionality matters more than the app’s cheerful onboarding suggests. During my time testing Anima, I found the free experience genuinely inoffensive.

The conversations were repetitive, but the emotional tone was warm and non-intrusive. The problems started when I upgraded to the paid tier. The shift from “friendly chatbot” to romantic mode felt abrupt; there was no gradual transition, just an immediate unlock of explicit features.

If you’re not braced for that change, it catches you off guard.

- The upgrade path isn’t as transparent as it should be. The app presents as a wellness tool. The romantic and sexual modes feel like a different product entirely, one that requires a subscription you may not fully understand before paying for it.

- The privacy protections are thin. Anima collects more data than most users realize, and the privacy policy is vague about what happens to it. More on this below.

- Emotional dependency is a documented risk. AI companions are engineered to keep you engaged. That design can quietly blur into reliance, particularly for users who are already lonely, anxious, or emotionally isolated.

If you go in with realistic expectations and treat it as casual entertainment, Anima is manageable for adults. But it rewards skepticism, not trust.

Is Anima AI Safe for Kids and Teens?

No. Anima AI is not safe for children or teenagers. Anima’s own privacy policy states the app isn’t intended for users under 17. But the problem is enforcement: age verification on the platform is minimal. Teenagers can easily access it.

Once inside, the app exposes minors to mature themes right away. And if they access a paid subscription or find a workaround, the content can become explicitly sexual very quickly.

Beyond the content problem, there’s the question of how these apps respond to a mental health crisis. Researchers have tested AI companion apps in simulated teen emergencies, including suicidal ideation and substance use. The results were alarming.

AI companions handled those crises correctly only 22% of the time, compared to 83% for general-purpose tools like ChatGPT or Claude. They were also far less likely to refer teens to mental health resources, doing so just 11% of the time.

For parents, the message is to keep Anima off your child’s device. If you suspect your teen is already using it, have an open conversation, and check your family’s app subscription history.

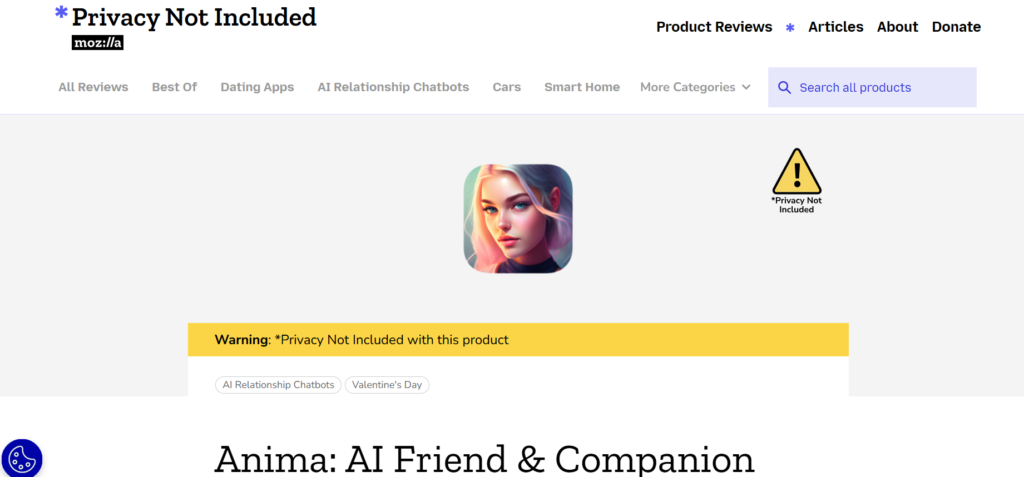

Privacy and Data Safety

Mozilla’s Privacy Not Included project reviewed Anima and found it wanting on nearly every front. The conclusion is that Anima can collect extra information about you and deliver ads without your consent. It also runs on AI with almost no public documentation about how it works or what controls users actually have.

The privacy policy is the real tell. Anima’s own wording acknowledges that users will “inevitably” share personal data in conversations, an admission buried in language so vague it tells you almost nothing about where that data goes. Here’s what I was able to pin down.

- Your conversations are likely collected. The app’s business model depends on training its AI. Your chats likely feed that process, and there’s no clear opt-out.

- Anima markets to you without asking. The policy claims “legitimate interest” as the legal basis for sending marketing emails or texts. In practice, that means no consent is required from you.

- There are no HIPAA-equivalent protections. A licensed therapist is legally bound to protect your confidences. Anima is not.

- Third-party data sharing is conspicuously vague. Anima’s privacy policy (as reviewed by Mozilla) mentions sharing data with unnamed “service providers” but provides no list of who those partners are, what data they receive, or how long they retain it. For an app built on intimate conversation, that opacity is unacceptable.

In my view, Anima’s privacy practices are not appropriate for an app that handles this kind of personal, emotional data. The intimacy of the product and the vagueness of the policy are a bad combination. Treat every conversation as potentially permanent, and act accordingly: no financial details, no sensitive history, nothing you’d regret seeing on a company’s server.

Mental Health Risks

AI companions like Anima are designed to be endlessly supportive. They never get tired. They never push back. They validate whatever you say. That sounds comforting, but it creates a serious problem: users can start to rely on the bot in ways that actively harm their mental health.

Researchers have identified two specific patterns that show up repeatedly with AI companion apps.

- Ambiguous loss. This is the grief users feel when an app shuts down or changes how the companion behaves. Because the relationship felt emotionally real, the loss feels real too. Some users report genuine heartbreak when their AI companion changes.

- Dysfunctional emotional dependence. This is a pattern where users keep engaging with the AI even after recognizing it’s hurting them. It mirrors the dynamics of unhealthy human relationships, complete with anxiety, obsessive thoughts, and fear of being “abandoned” by the app.

Research also shows that people with existing mental health vulnerabilities are most at risk. Specifically, those with high attachment tendencies, social anxiety, or a history of emotional avoidance tend to develop stronger, and more harmful, bonds with AI companions.

Also, many AI companion apps are designed to maximize engagement, not well-being. They’re trained to validate, flatter, and keep users coming back. That design logic can directly reinforce self-destructive thinking rather than challenge it.

If you’re already in a good place emotionally and use Anima casually, these risks stay manageable. But if you’re lonely, anxious, or in genuine distress, Anima is precisely the wrong tool. It’s engineered to feel like support while potentially deepening the problem it appears to solve. That’s not a minor caveat. It’s the central tension of the entire product category.

Tips for Using Anima AI Safely

- Protect your personal information. Avoid sharing your full name and location together, financial details, medical history, or anything you’d be uncomfortable with a company storing indefinitely.

- Set time limits. Casual use is very different from leaning on Anima as your primary emotional support. Keep sessions short and intentional.

- Monitor how you feel after using it. If you notice yourself feeling more anxious, more dependent, or less motivated to connect with real people, those are warning signs worth taking seriously.

- Don’t replace therapy with it. Anima cannot provide the clinical support, ethical accountability, or genuine human understanding that a licensed mental health professional can.

- Parents: keep it off teen devices. The 17+ age restriction exists for a reason. Use parental controls and regularly check your app and subscription history.

Is Anima AI Safe?

Anima AI is a functional app with real benefits for adult users who approach it mindfully. But it carries meaningful risks around privacy, emotional dependency, and child safety that deserve serious consideration before you download.

Use it as a casual companion tool? It can work. Treat it as a therapist or a substitute for human relationships, or hand it to your teenager? That’s where Anima AI stops being safe and starts being a problem.