Artificial intelligence has long supported engineering teams by accelerating analysis, code generation, and routine checks. Its earlier contributions were narrow in scope and usually produced a single result in response to a prompt or predefined input.

Agentic AI moves past that constraint. Instead of waiting for single prompts, these systems work inside ongoing processes, set interim goals, adjust their approach as new information appears, and examine their own outputs before proceeding.

This progression requires engineering managers to define how automated reasoning participates in day-to-day execution and to set operational rules that prevent agents from working in isolation.

Agentic AI systems can increase output in development, testing, and analytical processes. However, they also raise concerns about accountability, workflow boundaries, and reliability under real operating conditions. To maintain consistent outcomes, leadership should establish structured review mechanisms, designate which decisions require human verification, and set guardrails that constrain autonomous decision-making.

Understanding Agentic AI Beyond Basic Automation

To understand what agentic AI contributes, it helps to start with the systems that preceded it. Traditional AI models excelled at focused tasks such as image classification, translation, and recommendation. Their behavior relied on large supervised datasets or structured instructions that functioned well in controlled settings.[1] When circumstances shifted or new constraints appeared, these models struggled to adjust their interpretation or planning.

Classical agents and reinforcement learners offered additional flexibility. They worked well when parameters remained steady, such as trading algorithms that function within a predictable range. But during volatile or unexpected conditions, their fixed rules or reward structures restricted their ability to adapt.

Agentic AI differs by operating with open-ended goals. These systems decide how to approach a task rather than follow a preset path. They can adjust plans, reinterpret context, and modify strategies as conditions change. To ensure reliable use, organizations should establish boundaries that define when agentic systems act independently and when they must defer to human review.

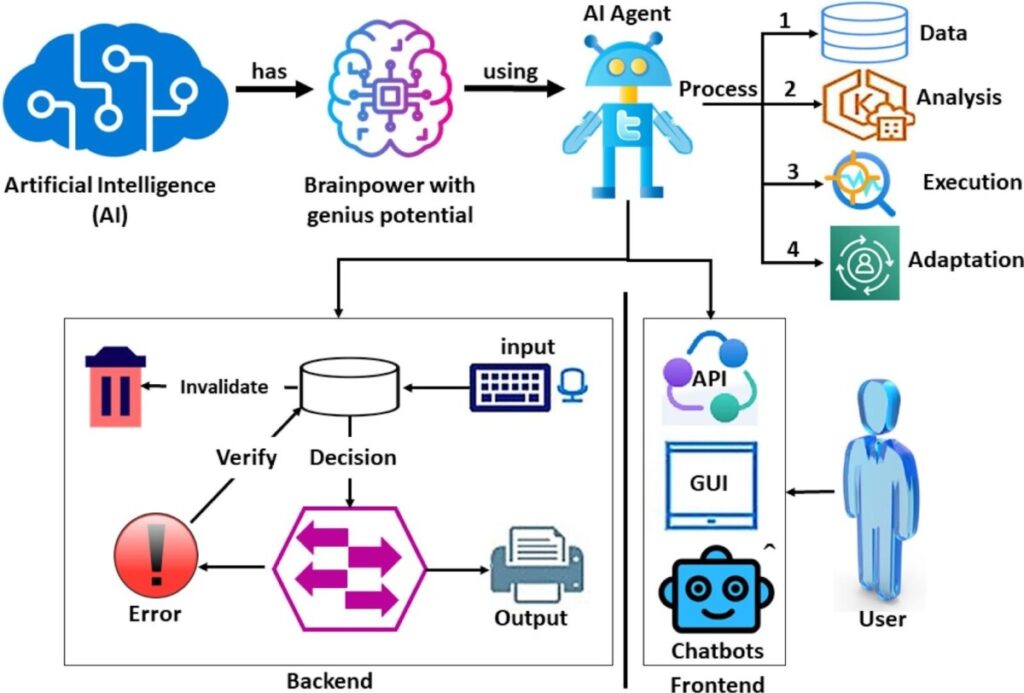

Research on agentic AI’s operational framework explains how these systems function as a sequence of reasoning and action stages.

The operational framework of agentic AI systems

This framework spans four stages linking core intelligence, reasoning, and user-facing actions:

1. Data: Gathers inputs (text, audio, visual) and interprets user intent/context.

2. Analysis: Validates inputs, retrieves memory, checks decisions, and detects errors.

3. Execution: Produces and delivers verified outputs via APIs, interfaces, or chat.

4. Adaptation: Incorporates feedback to update memory and decision logic.

This loop lets agentic AI move past fixed procedures and participate flexibly in complex processes. Leaders must anticipate how these systems behave across engineering workflows.
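The four stages can be sketched as a small loop. This is a minimal illustration, not a real agent framework: the `Agent` class, its method names, and the naive intent rule are all hypothetical, chosen only to show how each stage hands off to the next.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Toy agent illustrating the four stages (hypothetical, for illustration)."""
    memory: list = field(default_factory=list)

    def gather(self, raw: str) -> dict:
        # Stage 1 (Data): normalize the input and attach a naive intent guess.
        text = raw.strip()
        intent = "question" if text.endswith("?") else "instruction"
        return {"text": text, "intent": intent}

    def analyze(self, request: dict) -> dict:
        # Stage 2 (Analysis): validate the input and retrieve related memory.
        if not request["text"]:
            raise ValueError("empty input")
        related = [m for m in self.memory if m["intent"] == request["intent"]]
        return {**request, "related": related}

    def execute(self, plan: dict) -> str:
        # Stage 3 (Execution): produce an output (here, a summary string).
        return (f"{plan['intent']}: {plan['text']} "
                f"(prior similar: {len(plan['related'])})")

    def adapt(self, request: dict, feedback: str) -> None:
        # Stage 4 (Adaptation): store feedback so later runs can use it.
        self.memory.append({"intent": request["intent"], "feedback": feedback})

    def run(self, raw: str, feedback: str) -> str:
        request = self.gather(raw)
        plan = self.analyze(request)
        output = self.execute(plan)
        self.adapt(request, feedback)
        return output


agent = Agent()
print(agent.run("Why did the nightly job fail?", feedback="helpful"))
print(agent.run("Is the pipeline healthy?", feedback="ok"))
```

The second call sees one prior entry in memory, which is the point of the adaptation stage: what the agent does next depends on what it recorded earlier, so that stored state is something a team would likely want to log and review.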

How Agentic AI Is Restructuring Data, ML, and Software Engineering Workflows

Agentic systems interpret instructions, invoke tools, validate outputs, and iteratively refine steps. Engineering workflows should be redesigned to specify where agentic systems enter a process, how their outputs are validated, and which steps remain exclusively under human control.

Adaptive Data Processing and Validation

Agentic AI queries databases, retrieves logs, monitors pipelines, and compares longitudinal results. It flags irregularities and suggests follow-up checks, reducing repetitive monitoring work. Data teams can strengthen these capabilities by defining the thresholds that trigger human review, documenting how anomalies are escalated, and ensuring validation logic evolves as agents learn from new patterns.
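One way to make "thresholds that trigger human review" concrete is a simple z-score rule on a pipeline metric. The sketch below is an assumption about how a team might encode such a rule; the function name `review_action` and the default cutoffs of 2 and 3 standard deviations are illustrative choices, not recommendations from the research cited here.

```python
from statistics import mean, stdev


def review_action(history: list[float], latest: float,
                  warn_z: float = 2.0, escalate_z: float = 3.0) -> str:
    """Return 'ok', 'flag', or 'escalate' for the latest metric value."""
    if len(history) < 2:
        # Too little history to judge; defer to a human by default.
        return "escalate"
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is unexpected.
        return "ok" if latest == mu else "escalate"
    z = abs(latest - mu) / sigma
    if z >= escalate_z:
        return "escalate"  # route to human review
    if z >= warn_z:
        return "flag"      # agent records the anomaly and suggests a check
    return "ok"


daily_row_counts = [100, 102, 98, 101, 99]
for value in (101, 104, 110):
    print(value, review_action(daily_row_counts, value))
```

Keeping the cutoffs as named parameters means the escalation rule lives in version control, so any change to when humans get pulled in is itself reviewable.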

AI-Assisted ML Pipeline Management

Agentic AI tracks prior model runs, aggregates data, and summarizes model behavior. Teams that define evaluation standards, comparison baselines, and approval checkpoints prevent agent-generated insights from drifting into unverifiable territory. This keeps experimentation efficient without weakening quality controls.
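A comparison baseline and an approval checkpoint can be expressed as an explicit gate. The following is a hedged sketch: `RunSummary`, `approval_decision`, the metric names, and the default thresholds are hypothetical and stand in for whatever evaluation standards a team actually defines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunSummary:
    run_id: str
    accuracy: float
    latency_ms: float


def approval_decision(candidate: RunSummary, baseline: RunSummary,
                      min_gain: float = 0.01,
                      max_latency_ratio: float = 1.10) -> tuple[str, list[str]]:
    """Compare a candidate run to the baseline and list any failed checks."""
    reasons = []
    if candidate.accuracy - baseline.accuracy < min_gain:
        reasons.append("accuracy gain below threshold")
    if candidate.latency_ms > baseline.latency_ms * max_latency_ratio:
        reasons.append("latency regression")
    # Any failed check sends the run to a person instead of auto-promotion.
    status = "needs_human_approval" if reasons else "eligible_for_promotion"
    return status, reasons


baseline = RunSummary("run-041", accuracy=0.91, latency_ms=100.0)
candidate = RunSummary("run-042", accuracy=0.915, latency_ms=130.0)
print(approval_decision(candidate, baseline))
```

Returning the reasons alongside the status keeps the agent's summary verifiable: a reviewer sees which standard failed rather than only a verdict. A team could also require sign-off on eligible runs; the gate only decides what is surfaced first.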

Automated Review and Correction Cycles in Development

Coding assistants coordinate short loops of inspection and correction. Managers reinforce these cycles by enforcing code quality expectations, documenting acceptable corrections, and integrating agentic reviews into existing engineering practices rather than treating them as separate layers.
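A short inspection-and-correction loop might look like the sketch below, which caps the number of automated rounds and hands off to a person when issues persist. The names `review_loop`, `find_tabs`, and `expand_tabs` are hypothetical; a real assistant would plug in a linter or test runner and a model-driven fixer in their place.

```python
from typing import Callable


def review_loop(code: str,
                inspect: Callable[[str], list[str]],
                correct: Callable[[str, list[str]], str],
                max_rounds: int = 3) -> tuple[str, str]:
    """Run inspect/correct rounds; hand off to a human if issues persist."""
    for _ in range(max_rounds):
        issues = inspect(code)
        if not issues:
            return code, "clean"
        code = correct(code, issues)
    # Final check after the last allowed correction.
    status = "clean" if not inspect(code) else "needs_human_review"
    return code, status


def find_tabs(code: str) -> list[str]:
    # Stand-in inspector: the only rule is "no tab characters".
    return ["tab indentation"] if "\t" in code else []


def expand_tabs(code: str, issues: list[str]) -> str:
    # Stand-in corrector: replace each tab with four spaces.
    return code.replace("\t", "    ")


fixed, status = review_loop("def f():\n\treturn 1", find_tabs, expand_tabs)
print(status)
```

The `max_rounds` cap is the design choice that matters here: it bounds how long an agent can iterate on its own before the change returns to the team's normal review path.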

These workflow transformations make it essential for engineering leaders to redesign oversight, clarify responsibilities, and create predictable systems of review.

Redefining Engineering Leadership in AI-Driven Organizations

Agentic AI changes how engineering teams plan, review, and deliver work. Teams function more reliably when managers promote rigorous reasoning, targeted experimentation, and transparent discussions of automated system behavior.[4] These habits prevent teams from treating agent output as final answers.

Managers must understand how agents generate recommendations and recognize that outputs reflect the underlying training data and the context supplied. Effective oversight requires separating well-supported findings from agent conclusions that still need human review, especially in product and incident contexts.

As responsibilities shift, teams need clarity on the division of work between humans and agents. Managers must document ownership boundaries, specify which tasks remain human-led, and define how agents contribute to high-risk or time-sensitive decisions. Skills now appearing in routine workflows include:

- Constructing prompts for analysis and debugging to reduce rework.

- Establishing review procedures for AI-generated changes with clear assessment criteria.

- Implementing data governance to ensure agents use accurate inputs.

- Applying diagnostic methods to troubleshoot agent errors by checking prompts and data.

Agentic AI introduces measurable changes in how engineering work is organized. Leaders who consistently monitor system behavior, refine oversight frameworks, and adjust boundaries as agents evolve maintain stability while enabling teams to scale their output.

Productivity Gains and Emerging Operational Risks

Agentic systems can improve software engineering efficiency and provide faster decision cycles when integrated into well-designed processes. These gains become meaningful only when workflows explicitly define how agent-led steps support team intent.[5]

Organizations that clarify where autonomy is permitted, how outputs are reviewed, and which responsibilities remain human-led see more reliable results. Without this operational foundation, agentic systems may drift from intended behaviors or generate inconsistent results.

As adoption grows, leaders must manage the risks that come with autonomous decision-making in production environments:

- Context errors: Agentic models may act on incomplete or outdated inputs.

- Oversight demand: Autonomous planning requires new review points and monitoring practices.

- Workflow drift: Systems that refine their actions over time can introduce undocumented changes.

- Control tensions: Teams expect consistent performance from systems designed to adjust their behavior.

- Fragmented deployment: Independent rollouts across teams can produce conflicting processes.

Managing these risks helps organizations treat AI as a strategic opportunity. This shift is what enables leaders to operate with an AI-first mindset.

Strategic Opportunities Enabled by AI-Driven Operations

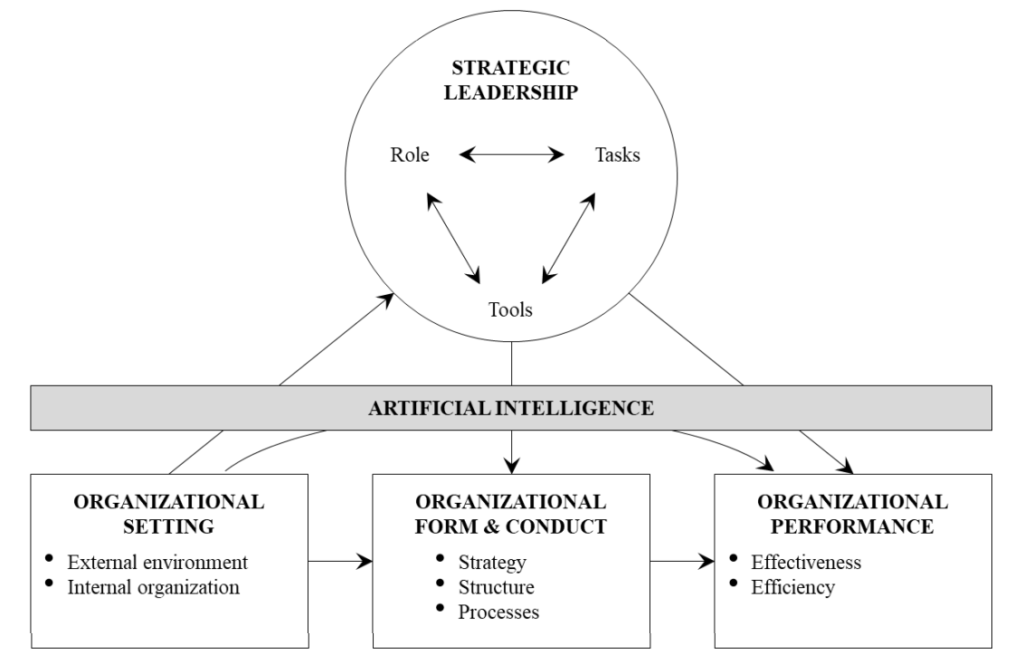

Treating AI as part of an organization’s foundation requires examining how decisions, structures, and responsibilities interact. Hambrick’s perspective on organizational settings provides a helpful point of reference. When applied to agentic AI, the model clarifies how external pressures, internal practices, strategic choices, and performance expectations interact.

Framework of AI in strategic leadership, adapted from Hambrick (1989)

Source: The Impact of Artificial Intelligence on Strategic Leadership | SSRN

This perspective helps leaders establish consistent inputs and feedback loops across engineering, product, and HR. By aligning these loops early, organizations remove ambiguity, speed up strategic evaluation, and prevent automated workflows from evolving in isolation.

With this structure, managers gain access to signals earlier, test assumptions more frequently, and adjust plans with greater precision. The aim is not rapid deployment but steady integration that allows automated reasoning to support routine decision processes.

Conclusion

Agentic AI is changing engineering workflows by altering how work is reviewed, coordinated, and delivered. Engineering managers are responsible for determining how these systems participate in established processes and how their reasoning is checked before influencing production systems. Teams depend on clear expectations, steady communication, and leaders who understand when automated steps are reliable and when human judgment must intervene.

As agentic AI becomes routine in software development and data workflows, leaders who commit to well-defined processes, practical oversight, and continuous skill development build more resilient teams. Thoughtful management establishes the guardrails that allow AI systems to operate predictably and support organizational goals with confidence.

About the Author:

Manushi Sheth is an engineering manager with experience leading global data teams across data engineering, analytics engineering, data analytics, and machine learning engineering.