When AI-generated text first became mainstream, it was easy to detect. Sentences were monotonous, certain words appeared over and over, and the text carried a solemn, flat tone devoid of any feeling.

But that was in 2022.

In 2026, there is no doubt that AI text generators have developed significantly. They have been trained to produce words with feeling, sentences with spontaneity, and paragraphs with anecdotes that imply a human author. As a result, AI detectors, even popular ones like QuillBot and ZeroGPT, now struggle.

They sometimes flag human writing as AI-generated (false positives), while lightly edited AI text passes unflagged. As machine text inches closer to how a human sounds, this raises the question: “Are AI checkers still accurate?” This article zeroes in on a widely used AI checker, ZeroGPT, and tests its accuracy.

What Is ZeroGPT?

ZeroGPT is an online AI checker. It scans text to determine its likely origin: human or machine. It checks for patterns, word use, and the level of burstiness (variation in sentence length and structure) typical of AI text. Educators commonly use it to check students’ work, and students use it to gauge their own writing before submitting it.
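ZeroGPT does not publish exactly how it measures burstiness, so the sketch below is only a toy proxy, assuming burstiness can be approximated as the coefficient of variation (standard deviation divided by mean) of sentence lengths. The function names `sentence_lengths` and `burstiness` are hypothetical, and the naive sentence splitter is a simplification.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split text on ., ! or ? and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Toy burstiness proxy: stdev of sentence length divided by its mean.

    Values near 0 mean uniform sentences; higher values mean the
    sentence lengths vary more from one sentence to the next.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.stdev(lengths) / statistics.mean(lengths)


# Every sentence here has exactly four words.
uniform = "The cat sat down. The dog ran off. The bird flew away."
# Sentence lengths of 1, 12 and 2 words.
varied = "Stop. The storm rolled in over the hills faster than anyone had predicted. We ran."

print(round(burstiness(uniform), 2))  # 0.0
print(round(burstiness(varied), 2))   # 1.22
```

Real detectors combine signals like this with a language model's own predictions, so a single number like this one should not be read as a verdict on its own.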

While ZeroGPT is primarily an AI detection tool, its additional features make it an all-in-one tool. It also offers an AI humanizer, plagiarism checker, AI summarizer, AI paraphraser, grammar checker, translator, word counter, dictionary, and AI email helper.

Why Use ZeroGPT?

People prefer human-sounding content. It feels genuine and expresses ideas through emotions, opinions, and personality that readers find relatable. Human writing also carries unique perspectives and personal insights.

By contrast, AI-generated text lacks many of these nuances. That’s why it’s routinely frowned upon, and why checkers like ZeroGPT are so widely used.

Is ZeroGPT Accurate?

1. Academic Writing (Essays)

We will start by testing ZeroGPT with academic writing. Academic writing tops the list of texts that get wrongly flagged, because its highly structured, formal style tends to read as predictable (low perplexity), which is exactly what detectors are tuned to catch.
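Perplexity measures how predictable a text is to a language model: it is the exponential of the average negative log-probability the model assigns to each token, so lower perplexity means more predictable wording. Below is a minimal sketch of that standard formula. The token probabilities are made up for illustration, and ZeroGPT’s actual model and thresholds are not public.

```python
import math


def perplexity(token_probs: list[float]) -> float:
    """Perplexity = exp(mean negative log-probability per token)."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Hypothetical probabilities a language model might assign to each token.
formal = [0.5, 0.5, 0.5, 0.5]    # predictable, formulaic wording
quirky = [0.5, 0.05, 0.2, 0.01]  # surprising word choices

print(round(perplexity(formal), 2))  # 2.0
print(round(perplexity(quirky), 2))  # 11.89
```

The formal sample scores far lower, which is why tightly structured human prose can land on the “AI” side of a detector’s threshold.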

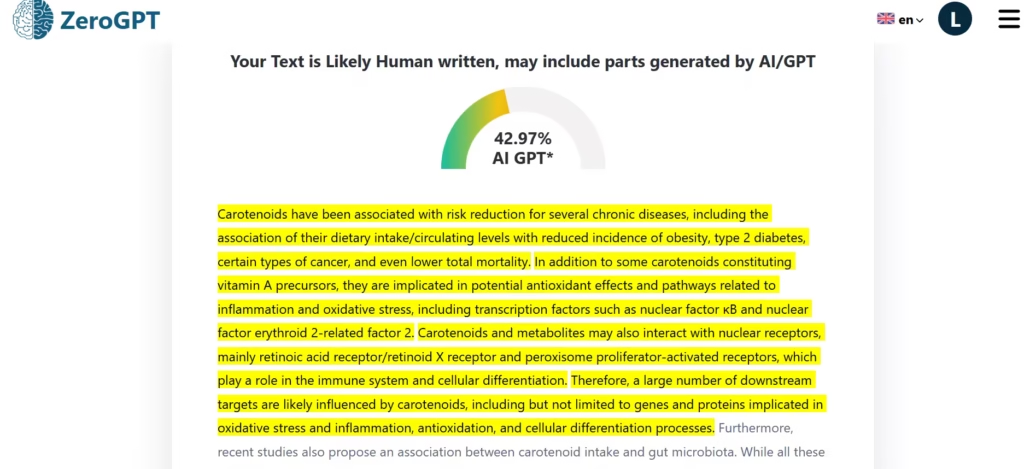

I pasted the abstract of a published research paper into ZeroGPT. It identified the text as largely human-written but flagged some parts as AI-generated. That is unlikely, given the journal’s high publication standards.

Score- 42.97% AI text. False positive.

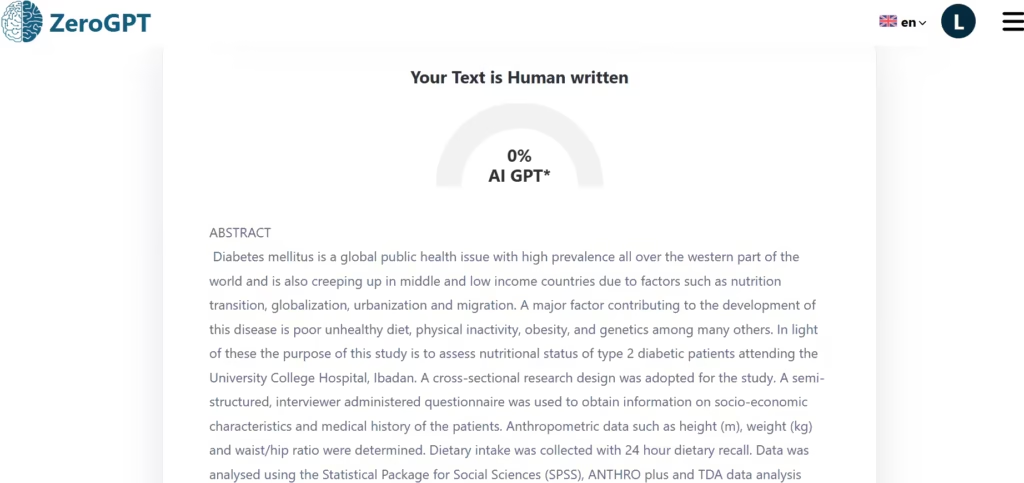

I ran the same test with another research abstract, one I wrote myself and can confirm is human-written. ZeroGPT correctly identified it as completely human-written.

Score- 0% AI text. True negative.

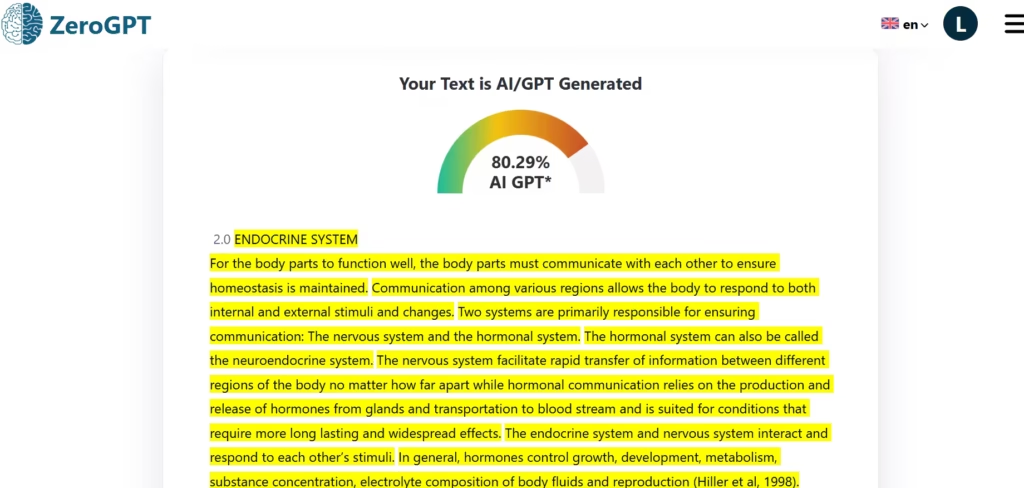

Given the mixed results so far, I ran another test with a different human-written sample of mine, this time a longer piece of text.

ZeroGPT flagged my human-written sample as largely AI-generated.

Score- 80.29%. False positive.

As expected, AI checkers like ZeroGPT often misidentify strictly structured texts, such as academic writing, as being of AI origin.

2. Blogs/Articles

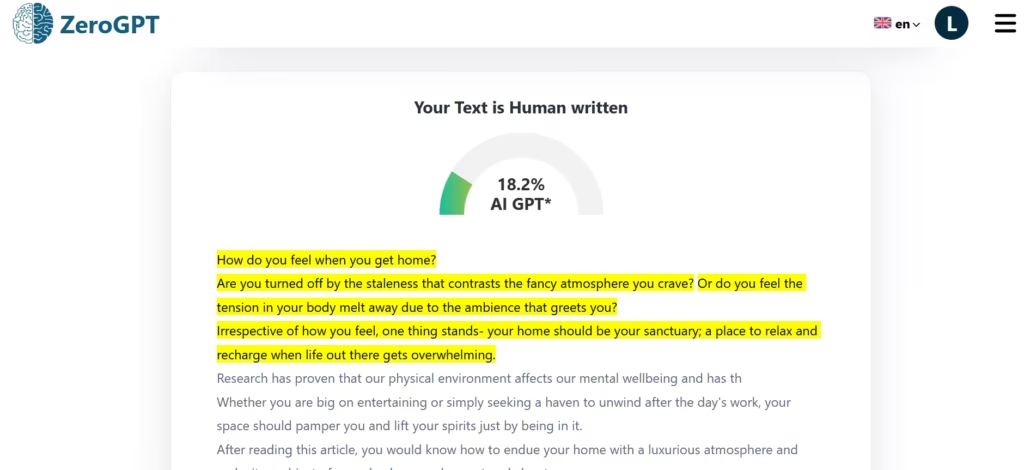

For this, I pasted the introduction of an article I wrote years ago, before AI chatbots became publicly available.

Score- 18.2%. Another false positive.

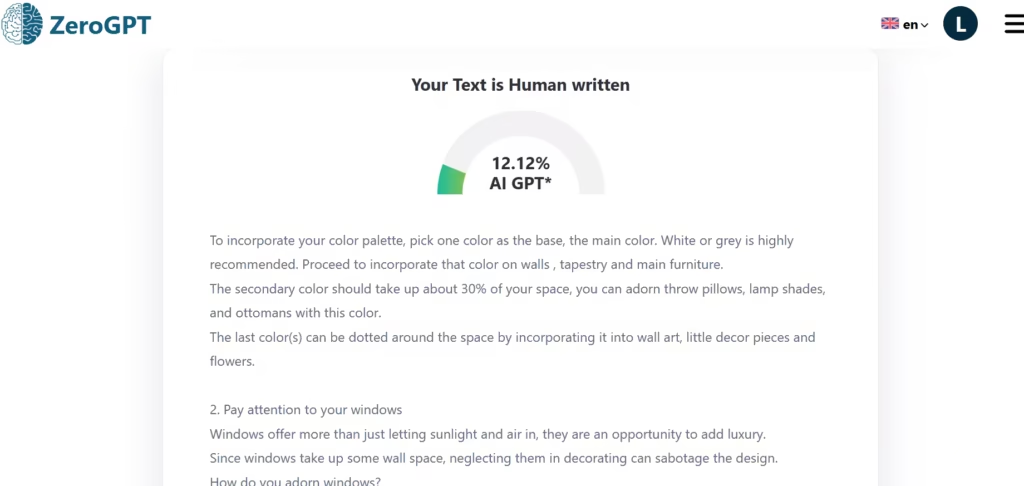

Score- 12.12%. False positive.

ZeroGPT reported it as human-written but still gave a score that implied that some parts were AI-generated.

3. Mixed Human + AI Text

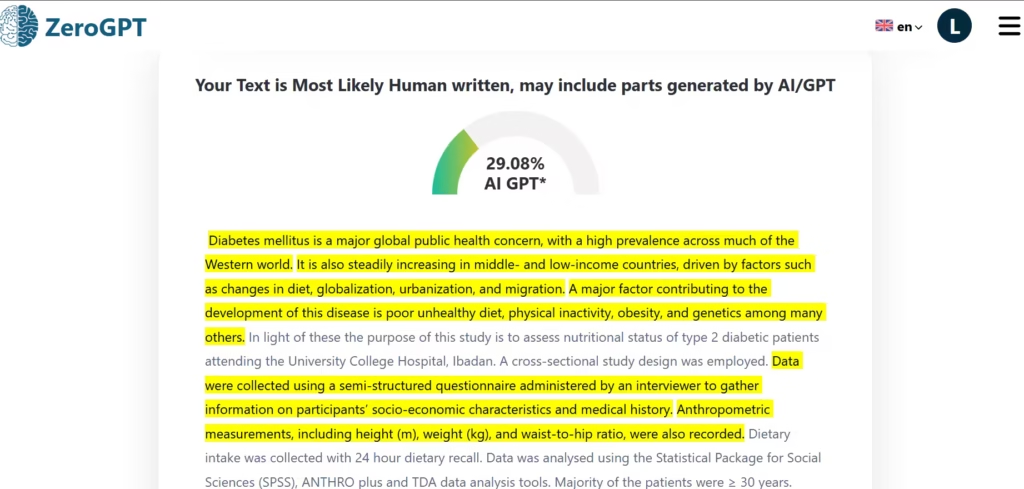

I built a sample from the text ZeroGPT had correctly identified as human-written, then swapped some of its sentences for AI-written ones.

ZeroGPT correctly identified the AI text I had slotted in.

Score- 29.08%. True positive.

4. Edited or Humanized AI Text

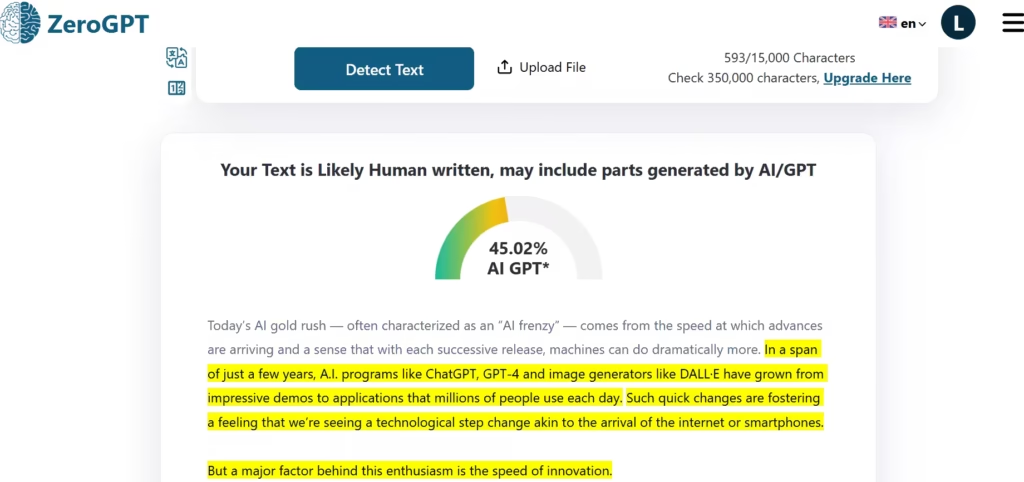

I used Humbot and Rewritify AI to humanize two pieces of text that ChatGPT had generated, then ran both through ZeroGPT.

For the first sample, ZeroGPT reported it as likely human-written, but with some AI elements.

Score- 45.02%. False negative.

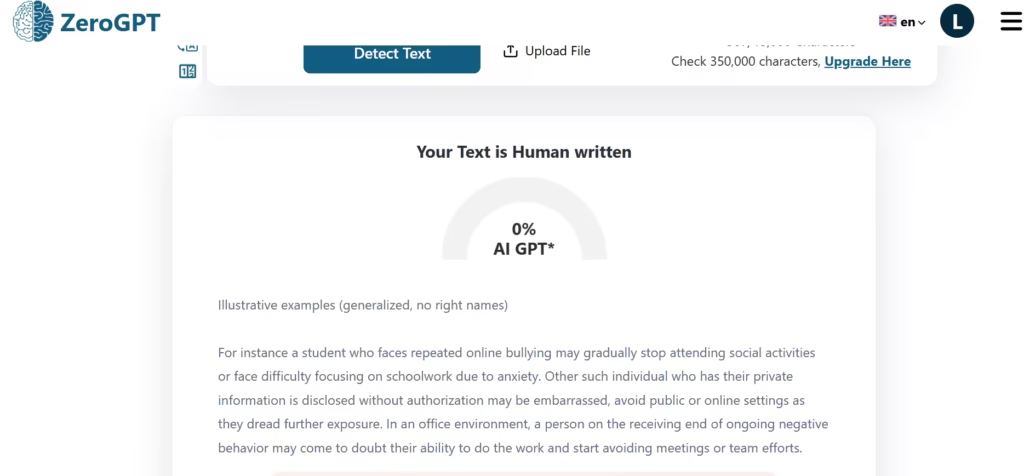

Then I ran the second sample.

ZeroGPT reported it as fully human-written.

Score- 100% human (0% AI text). False negative.

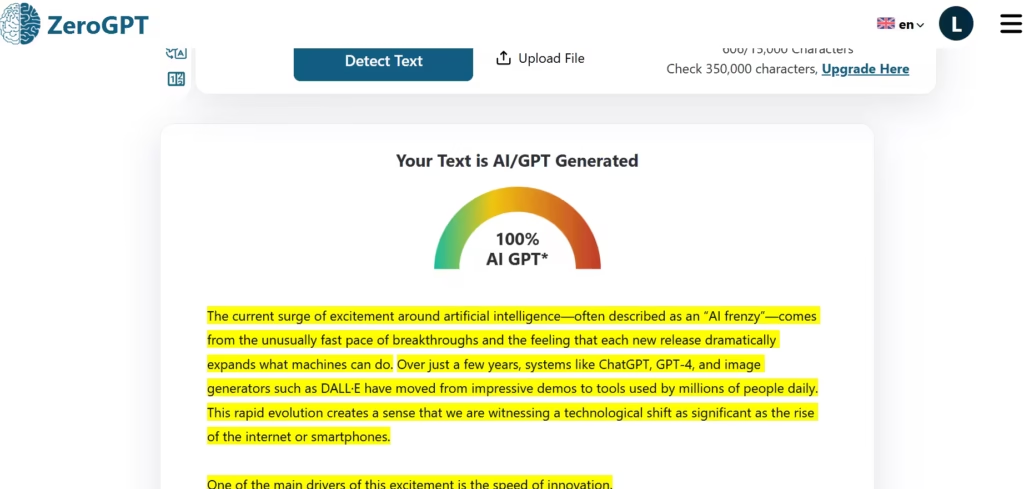

For comparison, I ran the original ChatGPT output through ZeroGPT. It scored 100% AI text, with a definitive verdict: “Your text is AI-generated.”

Score- 100%. True positive.

Based on these two samples, a good humanizer and some editing can significantly lessen the chance of detection.

False Positives Analysis

Out of the 5 tests using human-written samples, 4 were false positives.

False Negatives Analysis

Both tests on humanized AI samples came out as false negatives.

True Positives

Both tests on AI text that had not been humanized, the mixed human + AI sample and the raw ChatGPT output, came out as true positives.

True Negative

ZeroGPT correctly identified one sample, my own research abstract, as human-written.

Patterns

- ZeroGPT was easily fooled. Humanized AI samples either went undetected or were judged likely human-written.

- It is also biased against formal writing, which follows strict structures and writing conventions, and tends to flag it as AI-generated.

- ZeroGPT can also penalize good writing.

Overall Accuracy Verdict

Out of all 9 tests, ZeroGPT was right only 3 times: the two true positives (the mixed sample and the raw ChatGPT output) and the one true negative. That’s an accuracy rate of 33.3%.
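To make the arithmetic easy to check, here is a small tally of the nine tests. It treats any sample containing AI text as positive and counts a sample as “flagged” whenever it was labeled a false or true positive. The short test names are hypothetical shorthand, and the `outcome` helper is illustrative only. Besides accuracy, it reports the false-positive rate (human samples wrongly flagged) and false-negative rate (AI samples missed).

```python
from collections import Counter

# (hypothetical short name, ground truth, flagged as AI by ZeroGPT?)
# Assumption: the mixed human + AI sample counts as "ai" (positive).
TESTS = [
    ("Journal abstract", "human", True),
    ("My research abstract", "human", False),
    ("My longer academic sample", "human", True),
    ("Pre-chatbot article intro", "human", True),
    ("Second article sample", "human", True),
    ("Mixed human + AI text", "ai", True),
    ("Humanized sample 1", "ai", False),
    ("Humanized sample 2", "ai", False),
    ("Raw ChatGPT output", "ai", True),
]


def outcome(truth: str, flagged: bool) -> str:
    """Map ground truth and the detector's call to TP/FN/FP/TN."""
    if truth == "ai":
        return "TP" if flagged else "FN"
    return "FP" if flagged else "TN"


counts = Counter(outcome(truth, flagged) for _, truth, flagged in TESTS)
accuracy = (counts["TP"] + counts["TN"]) / len(TESTS)
false_positive_rate = counts["FP"] / (counts["FP"] + counts["TN"])
false_negative_rate = counts["FN"] / (counts["FN"] + counts["TP"])

print(f"accuracy: {accuracy:.1%}")                         # 33.3%
print(f"false-positive rate: {false_positive_rate:.0%}")   # 80%
print(f"false-negative rate: {false_negative_rate:.0%}")   # 50%
```

With only nine samples these rates are rough indicators rather than precise measurements, but they show where ZeroGPT went wrong most often: flagging human writing.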