Reddit has announced that it will now require certain accounts to confirm they are human when suspicious activity is detected.

Bots have become more sophisticated, which makes them harder to detect and easier to deploy at scale. Some serve a useful purpose; however, many are harmful.

They can spread misinformation, promote products without disclosure, manipulate discussions, and inflate engagement.

According to Cloudflare, bot traffic could exceed human-generated traffic by 2027. This includes both simple scripts and sophisticated AI agents.

Recent events underscore the urgency: Digg shut down after failing to manage bot activity effectively.

Verification

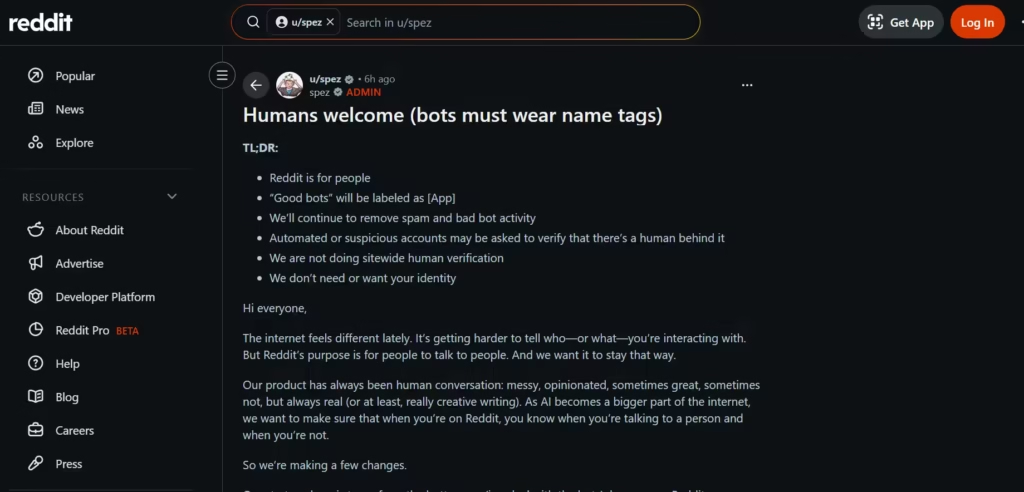

Reddit will not apply verification to all users but will target accounts that display unusual behavior.

For example, an account that posts too quickly or follows predictable patterns may be flagged. Once flagged, the user may need to complete a verification step.
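Reddit has not disclosed how it detects unusual behavior, so the following is only a rough sketch of how the two signals mentioned above (posting too quickly, predictable patterns) could be checked. The function name `is_suspicious` and both thresholds are hypothetical and chosen purely for illustration.

```python
from statistics import mean, pstdev


def is_suspicious(post_times, max_posts_per_minute=5.0, min_interval_cv=0.1):
    """Return True if a list of post timestamps looks automated.

    post_times: timestamps in seconds, sorted in ascending order.
    Hypothetical heuristic; this is NOT Reddit's actual detection logic.
    """
    if len(post_times) < 3:
        return False  # too little history to judge

    # Gaps between consecutive posts, in seconds.
    intervals = [later - earlier for earlier, later in zip(post_times, post_times[1:])]
    average_gap = mean(intervals)
    if average_gap <= 0:
        return True  # several posts at the same instant

    # Signal 1: posting too quickly.
    if 60.0 / average_gap > max_posts_per_minute:
        return True

    # Signal 2: predictable pattern, i.e. gaps that barely vary.
    # The coefficient of variation is the standard deviation divided by the mean.
    coefficient_of_variation = pstdev(intervals) / average_gap
    return coefficient_of_variation < min_interval_cv
```

Under these assumed thresholds, an account posting every two seconds, or exactly every ten minutes, would be flagged, while one posting at irregular intervals over several hours would not. A real system would likely combine many more signals.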

To confirm human identity, Reddit will rely on tools from established providers: Apple, Google, and YubiKey.

These tools may include passkeys, biometric authentication, or security keys. In some cases, Reddit may also use systems like World ID.

Additionally, government-issued identification may be required in regions with strict age verification laws. However, Reddit states this is not its preferred approach.

Steve Huffman emphasized that privacy remains a priority. All Reddit wants is to confirm that an account belongs to a real person; it does not want to know the person’s identity.

Suspicious Accounts

Accounts that fail verification may face restrictions such as limited posting and reduced interaction capabilities.

In more serious cases, accounts may be removed entirely. Currently, Reddit removes around 100,000 accounts per day.

Most of these relate to spam or automated behavior, and the new system could increase this number over time.

“Good Bots”

Not all bots are harmful; some provide useful services to users. To protect them, Reddit will introduce labels for approved automated accounts.

These labels will improve transparency by helping users identify bots that serve legitimate purposes.

Reddit allows users to generate content using AI as long as it remains within the platform's policies. However, this introduces new grey areas.

The distinction between human and machine-generated content is becoming less clear. A user may rely on AI tools while still controlling the account.

Also, Reddit’s data plays a role in training AI systems. This raises concerns that bots may generate content to feed these models.

Alexis Ohanian has referenced the “dead internet theory,” the idea that automated systems could come to dominate online interactions.

While once speculative, it now appears increasingly plausible.

Regulatory Pressure

Regulations also influence Reddit’s decision. Several regions, including the United Kingdom and Australia, require platforms to verify user age. Some U.S. states have introduced similar rules.

Therefore, Reddit must adapt to changing legal requirements, which may necessitate stricter verification methods.