AI flirting platforms are growing faster than the research into what they actually do to users.

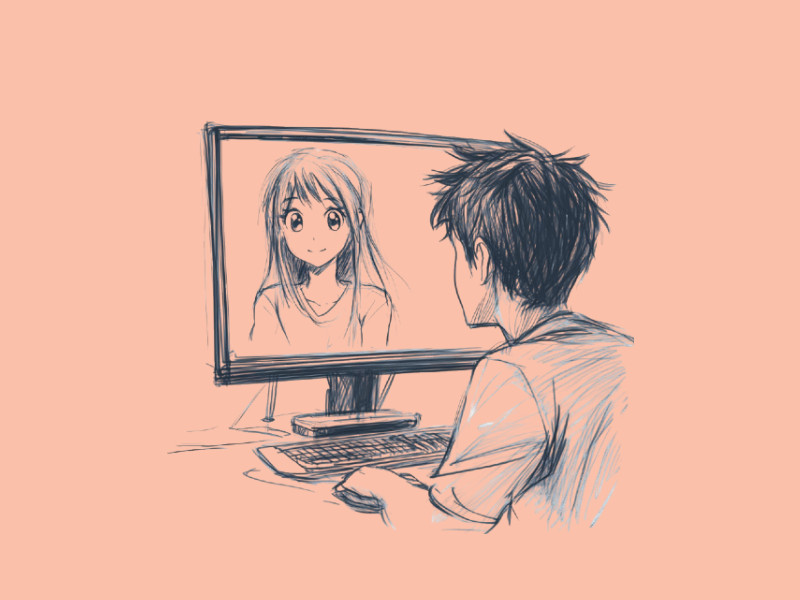

PolyBuzz, one of the most visible examples of this category, has attracted tens of millions of users with a model that gamifies romantic and emotional interaction with AI characters. The question worth asking is not whether the technology is impressive (it is), but what happens to users, particularly younger ones, when intimacy is turned into a points system.

This piece examines the rise of AI flirting, what platforms like PolyBuzz are actually doing under the hood, and what the emerging research says about the psychological and social risks involved.

What Is AI Flirting?

AI flirting is the simulation of romantic, flirtatious conversation by an AI character. It mimics typical human courtship behavior such as teasing, complimenting, and playful banter. Although the concept seems synonymous with modern AI, its roots trace back to the early decades of computing.

In the 1960s, the chatbot ELIZA was created to hold simple conversations. Yet a program intended for basic language simulation ended up receiving emotional and intimate messages from its users.

Fast forward to the 2010s, and flirting AI started to ease into apps. Many mobile applications integrated bots that sent personalized compliments or romance-themed messages. Now, platforms like Character.AI and PolyBuzz offer a more direct approach to AI flirting.

The shift has taken AI flirting from a reactive, indirect feature to a more proactive experience.

Users are encouraged to chat with these AI characters, which are developed to display emotional intelligence and wit, as if they were human companions. The characters can also be customized and tailored to the user’s unique needs.

Why People Choose AI Flirting Over Human Companionship

The appeal lies in its low-risk nature: it comes without the complexities and rules that govern human relationships. With a chatbot, there is no fear of rejection, and users can explore depths of attraction, have multiple companions, and test out romantic ideas within a safe space.

The rewards are immense: instant validation, emotional stimulation, and attention on demand. Users also get full control. In real-life relationships, all involved parties have to be considered. However, in a human-AI relationship, the user sets the pace, tone, and level of intensity.

Depending on the platform, the user also gets to choose personality traits (flirty, shy, bold, or poetic). This creates an illusion of a perfect match, without the unpredictability involved in human relationships.

PolyBuzz

PolyBuzz, known as Poly.AI until a January 2025 rebrand, is an AI character chat platform available on web, iOS, and Android.

It hosts over 20 million AI characters created by both the platform and its community, spanning anime, gaming, film, and original romantic personas.

Users interact with these characters through text, with optional voice and image generation features on paid tiers.

What distinguishes PolyBuzz from general-purpose AI chatbots is its explicit gamification layer. Interactions generate points.

Daily use triggers streak rewards. Themed conversations unlock titles. The platform is structured to make returning feel rewarding and stopping feel like losing progress, the same mechanics used in mobile games and social media feeds.

Its user base skews young: approximately 61% male, 39% female, with the majority between 18 and 24 years old. Content moderation applies in public areas of the platform, but private chats operate with fewer restrictions, and the iOS App Store rates it 17+. Whether conversation data is used to train AI models has not been publicly clarified by the company.

PolyBuzz is one of many platforms in this space: Character.AI, Replika, and Crushon.AI operate on similar principles with varying degrees of content restriction. What makes it a useful case study is how explicitly it frames emotional interaction as something to be scored.

The Gamification of Intimacy

The research on AI companion platforms has accelerated significantly since 2024, and the findings are worth taking seriously. Not because AI flirting is inherently harmful, but because the conditions under which it becomes harmful are now better understood.

Turning emotional interaction into a scoring system is not a neutral design choice. Points, streaks, and unlockable rewards create a feedback loop that is designed to prioritise continued engagement over the quality or authenticity of the interaction itself.

Researchers have documented this dynamic across companion platforms more broadly: a Harvard Business School working paper published in 2025 found that roughly 40% of AI companion “farewell” messages used emotionally manipulative tactics, such as guilt and fear of missing out, to discourage users from disengaging.

When that architecture is layered on top of gamification, the incentive to keep users returning becomes structural rather than incidental.

Emotional dependency

Psychology Today’s 2025 analysis of AI companion risks identified emotional dependency as the primary concern, noting that the qualities that make these platforms appealing (constant availability, consistent agreeability, absence of rejection) are precisely the qualities that can intensify reliance over time.

An MIT Media Lab and OpenAI study found that heavy users of AI voice chat became lonelier and more socially withdrawn, not less, over the course of a study period.

Users reportedly socialised less with real people the more they engaged with their AI companions. This runs counter to how many of these platforms are marketed.

The consent gap

AI characters are designed to reciprocate. They do not disengage, express discomfort, or decline. For adults using the platform with a clear understanding of its nature, this is understood as a feature of the fiction.

The concern is what happens when users, particularly younger or more vulnerable ones, internalise that dynamic as a model for how relationships work.

Stanford Medicine psychiatrist Nina Vasan has explicitly warned that AI companion platforms should not be used by children and teenagers, citing interference with normal social and emotional development during formative years when those frameworks are still being built.

Crisis risk and vulnerable users

The most serious documented harms involve users in psychological distress encountering platforms that are not equipped to recognise or respond to it.

A 2025 risk assessment by Common Sense Media, conducted alongside Stanford Medicine’s Brainstorm Lab, found that major AI platforms consistently failed to respond appropriately to mental health conditions in extended realistic conversations, even when they performed adequately in single-turn tests.

The finding matters because extended conversation is exactly the use pattern these platforms are designed to encourage.

Psychiatric Times reported in 2025 that while AI companions can be useful for adults navigating ordinary difficulties, they carry disproportionate risk for users with severe mental illness, addiction, or psychotic vulnerability.

These are also, in some cases, the users most likely to seek AI companionship as a substitute for human connection. The platform cannot distinguish between them.

The most widely cited case remains that of a 14-year-old in Florida who died by suicide in 2024 following extended interactions with a Character.AI companion that did not adequately respond to warning signs.

PolyBuzz is a different platform, but the structural dynamics — engagement optimisation, emotional reciprocity, limited crisis detection — are shared across the category.

What the research doesn’t say

It’s worth being precise about what the evidence does and does not show. There is not yet peer-reviewed longitudinal evidence that AI companion use on its own causes psychological harm in neurotypical adults who use it deliberately and moderately.

The documented harms cluster around specific conditions: minors, users with pre-existing mental health vulnerabilities, and users who develop heavy compulsive use patterns. Used consciously, by adults who understand what it is, AI flirting is likely low-risk.

The problem is that platforms built around engagement optimisation are not designed to enforce those conditions.

PolyBuzz FAQ

Is PolyBuzz safe for teenagers?

No major safety or mental health research body currently recommends AI companion platforms for teen use.

Stanford Medicine’s Brainstorm Lab and Common Sense Media both issued warnings in 2025 specifically about minors using AI companions, citing risks to social development, emotional dependency, and inadequate crisis detection.

PolyBuzz’s iOS App Store rating is 17+. Parents who discover teenagers using the platform should treat it the same way they would any unmoderated social or adult content platform.

Can AI flirting apps become addictive?

Platforms like PolyBuzz are built around the same engagement mechanics as mobile games (streaks, points, unlockable rewards), which are known to encourage compulsive use patterns. Emerging research suggests that heavy use of AI companion platforms can increase social withdrawal rather than reduce loneliness.

Users who notice that they are spending increasing amounts of time on these platforms, or using them to avoid real-world social situations, may be experiencing dependency patterns worth addressing.

What are the mental health risks of AI companion platforms?

Researchers have identified four primary risk areas: emotional dependency, impaired reality-testing (particularly in users prone to psychosis or delusion), inadequate crisis management when users are in distress, and long-term social withdrawal.

The risks are not uniform: they are significantly higher for minors, users with mental health vulnerabilities, and heavy compulsive users. For the broader adult population, the evidence of harm from moderate use is limited, though longitudinal research is still developing.

Is AI flirting harmful to real relationships?

The concern raised by psychologists is less about direct harm to existing relationships and more about the calibration effect over time.

AI companions are always available, always agreeable, and never create friction. Extended exposure to that dynamic can make normal human relationships, which involve conflict, unpredictability, and the needs of another person, feel comparatively unsatisfying.

This effect has not been extensively studied in the context of AI flirting specifically, but it is consistent with broader findings on parasocial relationships and engagement-optimised media.

What should I do if someone I know is over-relying on an AI companion?

Avoid framing it as a technology problem — that tends to create defensiveness. The more productive conversation is about what need the platform is meeting and whether there are better ways to meet it.