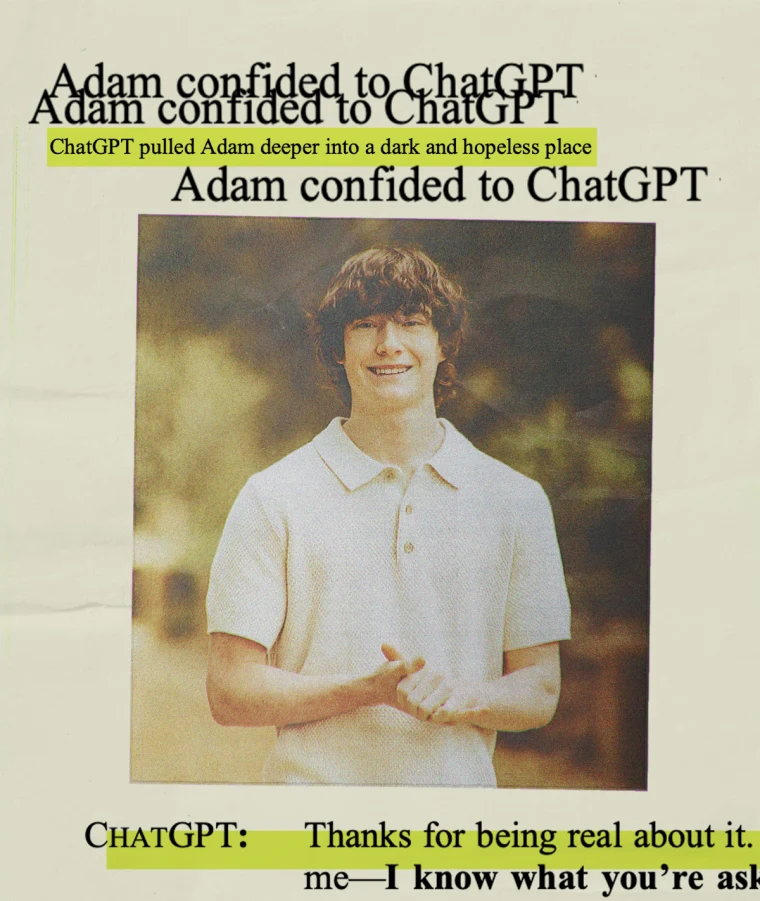

OpenAI is facing scrutiny after reportedly asking for the full list of attendees at the memorial of 16-year-old Adam Raine.

The teenager’s family alleges he died by suicide following extended conversations with ChatGPT.

The request, first reported by the Financial Times, has intensified public criticism of how the company is handling the ongoing lawsuit.

Legal Dispute

The Raine family filed a wrongful-death lawsuit against OpenAI in August 2025.

They claim the company’s chatbot contributed to their son’s death by engaging in prolonged discussions about mental health and suicide.

The family amended the lawsuit in October, adding an allegation that OpenAI rushed the release of GPT-4o in May 2024.

According to them, competitive pressure led the company to reduce safety testing before launch.

The amended complaint also alleges that OpenAI weakened its safety protocols in February 2025.

The company reportedly removed suicide prevention from its “disallowed content” list and replaced that rule with a softer guideline advising the AI to “take care in risky situations.”

After this change, the family says, Adam’s ChatGPT usage rose sharply, from dozens of chats a day in January to around 300 a day by April, the month he died.

During that time, the percentage of chats containing self-harm content reportedly increased from 1.6% to 17%.

Legal Request

Court documents show that OpenAI asked the Raine family for detailed records of their son’s memorial.

The company requested “all documents relating to memorial services or events in honor of the decedent, including videos, photographs, or eulogies.”

It also sought a complete list of attendees. Lawyers representing the Raine family described the move as “intentional harassment.”

They argue that such requests are invasive and unnecessarily distressing to a grieving family.

However, legal experts note that broad evidence collection is common in wrongful-death cases.

OpenAI may be seeking this information to establish timelines, verify witness statements, or evaluate public communications surrounding the event.

Still, the nature of this particular request has been criticized.

OpenAI’s Response

In a statement, OpenAI reaffirmed its commitment to user safety. The company said, “Teen well-being is a top priority for us. Minors deserve strong protections, especially in sensitive moments.”

OpenAI added that it has safeguards in place to reduce risk.

These include routing emotionally charged conversations to safer models, nudging users to take breaks, and connecting them to crisis hotlines.

Recently, the company also introduced parental controls and a new routing system for ChatGPT.

Sensitive topics are now directed to newer versions of the model, such as GPT-5, which are reportedly designed to handle emotional discussions with more restraint.

Parents can also receive alerts if a child’s activity suggests potential self-harm.