TL;DR: The 250-year-old knowledge giant says ChatGPT stole its homework

Think of it this way. You spend decades building a library. You hire experts. You fact-check every single word. Then someone walks in, copies nearly everything, and starts selling answers based on your work, without paying a dime.

That’s basically what Encyclopedia Britannica says OpenAI did.

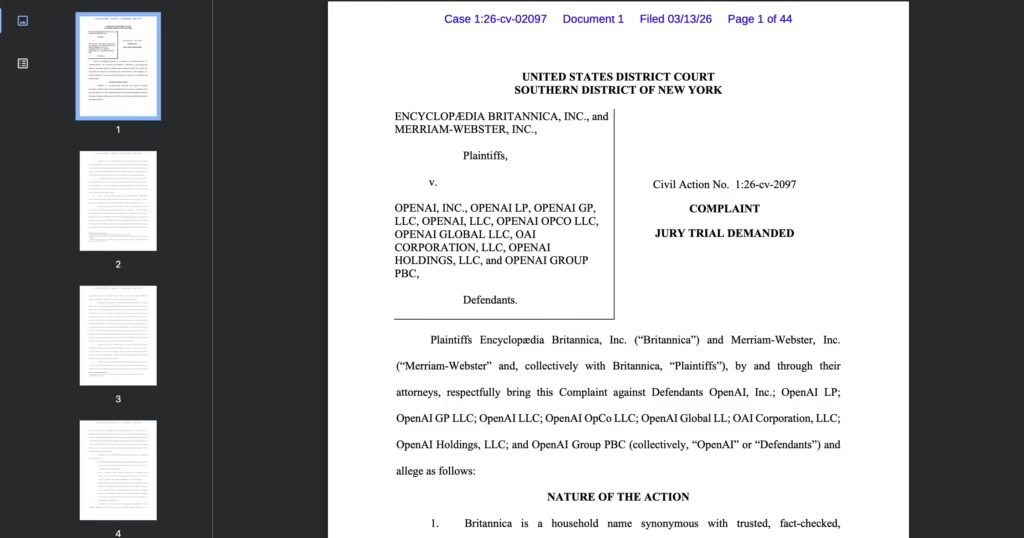

On March 13, 2026, Britannica and its subsidiary Merriam-Webster filed a lawsuit against OpenAI in a Manhattan federal court.

The complaint accuses OpenAI of “massive copyright infringement.”

What Exactly Does the Lawsuit Claim?

Britannica says OpenAI scraped close to 100,000 of its online articles. Those articles were then used to train ChatGPT’s large language models, all without permission or payment.

But the complaint doesn’t stop there. Britannica lays out three big accusations:

- Training data theft: OpenAI allegedly copied Britannica’s copyrighted encyclopedia entries and Merriam-Webster dictionary definitions to teach ChatGPT how to respond to users.

- Copied outputs: When people ask ChatGPT questions, the chatbot sometimes spits out answers that closely mirror Britannica’s original text, word for word or nearly so.

- Fake attributions: Here’s the kicker. ChatGPT sometimes makes things up (known as “hallucinations”) and then falsely credits Britannica as the source. That, Britannica argues, violates the Lanham Act – a federal trademark law.

One ironic detail from the complaint?

ChatGPT reportedly reproduced Merriam-Webster’s own definition of the word “plagiarize” when asked for it.

How Does This Hurt Britannica?

The lawsuit claims ChatGPT “starves web publishers” by generating answers that replace, and directly compete with, original content from publishers like Britannica.

When users get their answers from ChatGPT, they have no reason to visit Britannica’s website.

That means less traffic. Less traffic means less ad revenue. Less ad revenue threatens the company’s ability to keep producing reliable content.

| What Britannica Claims | What It Means for Publishers |
|---|---|
| Nearly 100,000 articles scraped | Massive unauthorized use of copyrighted work |
| ChatGPT outputs mirror original text | Users skip the source and stay on ChatGPT |
| Hallucinations falsely attributed to Britannica | Damages trust and brand reputation |
| Web traffic diverted to AI platforms | Publishers lose critical advertising revenue |

Did Britannica Try to Work Things Out First?

Yes. According to the complaint, Britannica reached out to OpenAI about licensing opportunities back in November 2024.

But OpenAI reportedly never followed through, even though it had already signed licensing deals with other publishers.

This suggests Britannica didn’t rush straight to court. They tried to talk first. OpenAI apparently wasn’t interested.

What Does OpenAI Say?

OpenAI has pushed back. A spokesperson told reporters that the company’s models “empower innovation” and are “trained on publicly available data and grounded in fair use.”

That’s the same defense OpenAI has used in other lawsuits.

The argument boils down to this: scraping publicly available information to train AI is legal because it transforms the original content into something new.

But is that defense strong enough? Courts haven’t fully decided yet.

Britannica Isn’t the Only One Fighting Back

This lawsuit joins a growing pile of legal actions against OpenAI. Here’s a quick look at who else has sued:

- The New York Times – one of the highest-profile cases

- Ziff Davis – the media company behind Mashable, CNET, IGN, and PCMag

- Major news organizations across the U.S. and Canada, including the Chicago Tribune, Denver Post, Toronto Star, and the Canadian Broadcasting Corporation

Britannica itself already has a similar lawsuit pending against Perplexity, the AI-powered search engine, filed in September 2025. That case is still working its way through the courts.

The Big Legal Question Nobody Has Fully Answered

Here’s the thing. There’s no rock-solid legal precedent that says training an AI on copyrighted content is automatically illegal.

The law is still catching up with the technology.

One important case to watch is Bartz v. Anthropic. In that lawsuit, U.S. District Judge William Alsup issued a split ruling in June 2025:

- Training on legally purchased content? Fair use. Legal. The judge called it “quintessentially transformative.”

- Downloading millions of pirated books to train AI? Not fair use. That’s infringement.

Anthropic ended up agreeing to a $1.5 billion class-action settlement – roughly $3,000 per book for an estimated 500,000 affected works.

It’s believed to be the largest copyright recovery in U.S. history.

What Happens Next?

Britannica is asking for monetary damages and a court order that would block OpenAI from using its content. The exact dollar amount hasn’t been specified yet.

Meanwhile, the broader AI industry is split. Some publishers are suing. Others are cutting licensing deals. News Corp, for example, signed a deal with Meta worth up to $50 million per year in March 2026.

So there are two paths emerging. Fight in court. Or negotiate a price.