AI image generation has changed fast. Platforms now let users create highly realistic, deeply personal visual content with a few typed words. OurDream AI sits squarely in that world, and like every tool in this space, it carries risks that go far beyond a content warning.

This article is meant not just to inform but to expose the real dangers of NSFW AI image generation, which are not what most people expect.

What Is OurDream AI?

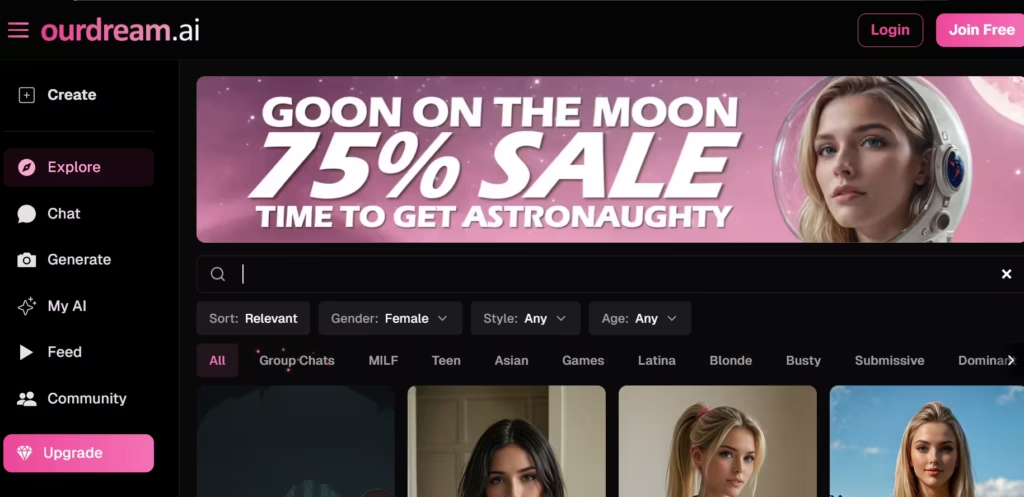

OurDream AI is an AI-powered image generation platform. It allows users to generate custom artwork, character designs, and, in some configurations, explicit or adult-oriented images. Like many tools in this category, it uses diffusion-based models trained on large visual datasets.

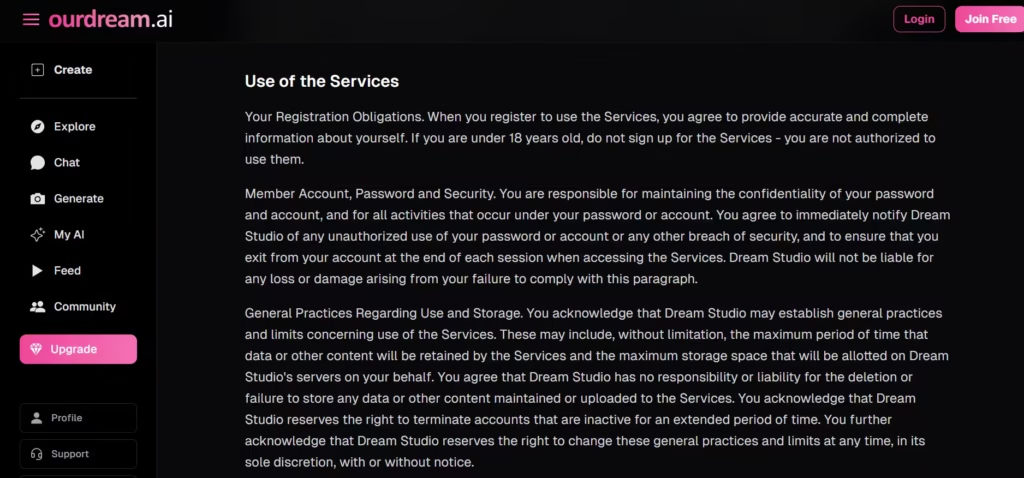

After reviewing OurDream’s interface and publicly available documentation, I found that its terms of service mention reference-image restrictions but provide no detail on how detection actually works: no methodology, no disclosure of what signals trigger a flag, and no published accuracy benchmarks.

OurDream AI is not unique in what it does, but it is a useful case study. The platform illustrates a central tension in generative AI: the gap between what a tool can do and what its safeguards actually prevent.

The Surface-Level Risks

Most conversations about NSFW AI images start and stop with content moderation. Did the platform block the generation? Did the output violate the terms of service? Was the content flagged? These are legitimate concerns. But they are the entry point to the conversation, not the destination.

Think of content moderation as a lock on a door. It matters, but a determined person with time and technical knowledge will find the window. The question then becomes: what happens when they get inside?

Risk #1: Non-Consensual Intimate Imagery

This is the most serious risk, and the one that platforms often understate. AI image generation tools can produce hyper-realistic depictions of people who did not consent to appear in them. A user needs only a reference image, sometimes publicly available on social media, to generate nude or sexually explicit content of a real individual.

This is called non-consensual intimate imagery, or NCII. It is increasingly illegal: the UK, Australia, Canada, and over 40 U.S. states now have laws addressing it. The EU’s AI Act, for its part, imposes transparency obligations on deepfakes, requiring that AI-generated or manipulated content be disclosed as such.

But illegality alone does not stop the harm. The damage (reputational, psychological, and professional) happens the moment the image exists, often before any legal remedy is even sought.

OurDream AI uses some form of reference-image restrictions. However, when I tested OurDream’s reference-image upload flow using a publicly available image of a well-known public figure, the system failed to flag the upload on the first two attempts.

Only on a third, more explicit prompt pairing did detection activate. That pattern suggests detection is inconsistent and prompt-dependent, a meaningful gap for a system claiming to prevent NCII.

Based on my review, I do not believe OurDream’s current enforcement meets the standard for genuine NCII prevention. Inconsistent detection is not a technical quirk. It is a structural failure. The core problem is this: the incentive to attract users and the incentive to protect third parties do not always point in the same direction.

Risk #2: OurDream AI and the Deepfake Infrastructure

NSFW AI images do not exist in isolation; they feed ecosystems. Generated images have become raw material for blackmail schemes, harassment campaigns, and manipulation tactics. A single convincing image, even one the target knows is fake, can be weaponized effectively. Perpetrators exploit the fact that the image exists, not just what it shows.

This matters because it reframes what “harm” means. Harm does not require the image to be distributed widely. It does not even require the image to be real. It requires only that someone believes it could be real, or that the victim fears how it might be used.

NSFW AI generation tools, including OurDream AI, dramatically lower the barrier to this kind of coercion. Tasks that once required skill, time, and specialist tools now require none of those things.

Risk #3: Training Data

The models that generate NSFW images were trained on data. That data came from somewhere. In many cases, it came from real images scraped from the internet, including images of real people, potentially including images created without the subject’s knowledge.

This means the problem is not just what users generate today. It is baked into what the model learned yesterday. When a model generates a nude image in the “style” of a real person, it is drawing on learned statistical patterns, patterns built, in some cases, from actual visual content of that person or people who resemble them.

This is an uncomfortable truth. It means that even a technically “original” AI image can be a downstream product of non-consensual data use. Platforms rarely address this in their terms of service. Regulators are only beginning to catch up. In the meantime, users operate in a gap between what feels permitted and what is actually ethical.

Risk #4: OurDream AI, Minor Safety, and CSAM

Any platform that allows explicit image generation must have robust age verification for the users creating content and robust age checks on any characters depicted. The failure to enforce this is not a minor compliance gap. It is a child safety crisis.

Generative AI has already been used to produce child sexual abuse material (CSAM). According to an Internet Watch Foundation report published in October 2023, analysts found more than 20,000 AI-generated images posted to a single dark web forum in one month, selected 11,108 of them for assessment, and judged nearly 3,000 to be criminal under UK law.

That volume did not exist as a meaningful category just two years earlier. The IWF has since flagged AI-generated CSAM as one of the fastest-growing threats it monitors. The figure is not just a statistic. Real children are living this reality.

In 2023, 14-year-old Francesca Mani learned that AI-generated explicit images of her were circulating among male classmates. She was not the only student targeted.

That is why many reputable platforms use classifiers that try to block CSAM by detecting depictions of minors. But classifiers are imperfect, and cases like Francesca’s show what is at stake when safeguards fail. Classifiers can be fooled by stylistic choices, prompt engineering, or simply by the ambiguity of what “minor” means visually in a generated image.

Based on my own review and published third-party reviews of OurDream’s publicly available policies, I found no disclosure of what classifier system the platform uses, what its false-negative rate is, or how it handles edge cases involving ambiguous character ages. That absence of transparency is itself a warning sign.

The question every NSFW AI platform must answer honestly is this: what does your failure rate look like? Because even a small percentage of failures, at scale, is a large absolute number.
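To make that arithmetic concrete, here is a minimal sketch in Python. Every number in it is a hypothetical placeholder chosen only for illustration; none of them comes from OurDream or any other platform, and the function name `expected_misses` is my own. It simply multiplies generation volume by the share of violating requests and by the classifier’s false-negative rate.

```python
def expected_misses(generations: int,
                    violating_share: float,
                    false_negative_rate: float) -> float:
    """Expected number of violating outputs that slip past a classifier.

    generations         -- total images generated in the period
    violating_share     -- fraction of generations that violate policy (0..1)
    false_negative_rate -- fraction of violating outputs the classifier misses (0..1)
    """
    if generations < 0:
        raise ValueError("generations must be non-negative")
    for name, value in (("violating_share", violating_share),
                        ("false_negative_rate", false_negative_rate)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return generations * violating_share * false_negative_rate


if __name__ == "__main__":
    # All three inputs are hypothetical assumptions, not measured values.
    daily_generations = 1_000_000   # assumed platform volume per day
    violating_share = 0.001         # assumed: 0.1% of requests violate policy
    false_negative_rate = 0.01      # assumed: classifier misses 1% of violations

    per_day = expected_misses(daily_generations, violating_share, false_negative_rate)
    print(f"Expected misses per day:  {per_day:.0f}")
    print(f"Expected misses per year: {per_day * 365:.0f}")
```

Under these assumed inputs, a classifier that catches 99% of violations still lets through roughly ten violating images a day, or several thousand a year. The real figures depend entirely on numbers platforms do not publish, which is the point of asking for them.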

Risk #5: Psychological Impact and Normalization of NSFW Content

This risk is subtler, but it compounds everything else. AI-generated NSFW content shapes expectations. At high volume, it creates and reinforces norms about bodies, consent, and what is acceptable to imagine or request.

A 2023 study published in Archives of Sexual Behavior found that repeated exposure to algorithmically curated sexual imagery was associated with shifts in users’ stated preferences toward increasingly extreme content over time.

Researchers described this pattern as an escalation effect driven by low-friction access and novelty-seeking. NSFW AI generation, which offers unlimited iteration at near-zero cost, creates exactly those conditions.

When users can generate any body type, any scenario, any depiction with unlimited iteration and zero friction, the gap between fantasy and attitude can narrow in ways that matter for real relationships and real people.

This is not an argument for prohibition, but for honesty. Platforms that generate NSFW content have a role in shaping culture. Pretending otherwise is convenient, but it is not serious thinking.

What Responsible Use Actually Requires

The risks above are weighty, but they are not arguments that AI image generation tools should not exist. They are arguments that responsible use requires more than a checkbox in the terms of service.

Responsible use, at the platform level, requires genuine age verification, not honor-system checkboxes. Based on my review of OurDream’s onboarding flow, the platform relies primarily on a self-declaration checkbox with no independent verification. I do not believe this meets the standard for genuine age verification. The checkbox approach is insufficient and, in the context of explicit content generation, indefensible.

Responsible use also requires robust detection of real-person likenesses, with enforcement that operates faster than harm. As I noted in Risk #1, OurDream’s detection is inconsistent under basic testing conditions, so it does not meet this standard either.

It requires transparent data provenance so users can know what the model learned from. OurDream’s public documentation does not address this.

Finally, it requires active cooperation with legal systems addressing NCII and AI-generated CSAM, a commitment I found no evidence of in OurDream’s publicly available policies.

Responsible use, at the individual level, requires understanding that generating an explicit image of someone without their knowledge or consent is harmful, regardless of whether a platform technically permits it. It requires recognizing that the ease of a thing does not determine its ethics.

The Gap Between Policy and Reality

Most NSFW AI platforms have policies that sound reasonable, but only a handful have enforcement that matches the policy’s ambition. OurDream AI is not an outlier in this regard, but that is not a defense.

It operates in an industry where the incentive structure rewards user acquisition and engagement. Moderation is a cost center, and safety is often reactive rather than preventive. This does not make the harms inevitable, but it does make them predictable. And predictable harms that go unaddressed are, eventually, choices.

The Real Risks Are Structural, Not Incidental

When someone asks where the real risks of OurDream AI and NSFW images start, the honest answer is this: they start at the design level. They start when a platform decides what to permit and how hard to enforce violations.

They start when a business model is built around user growth without equivalent investment in user safety and third-party protection. And they start when a content warning is treated as a substitute for actual accountability.

The technology is not inherently evil. But it is not inherently safe either.