A year after shaking up Silicon Valley, China’s scrappy AI startup is back with a bold new model. And this time, it doesn’t need Nvidia.

The Model That Has Everyone Paying Attention Again

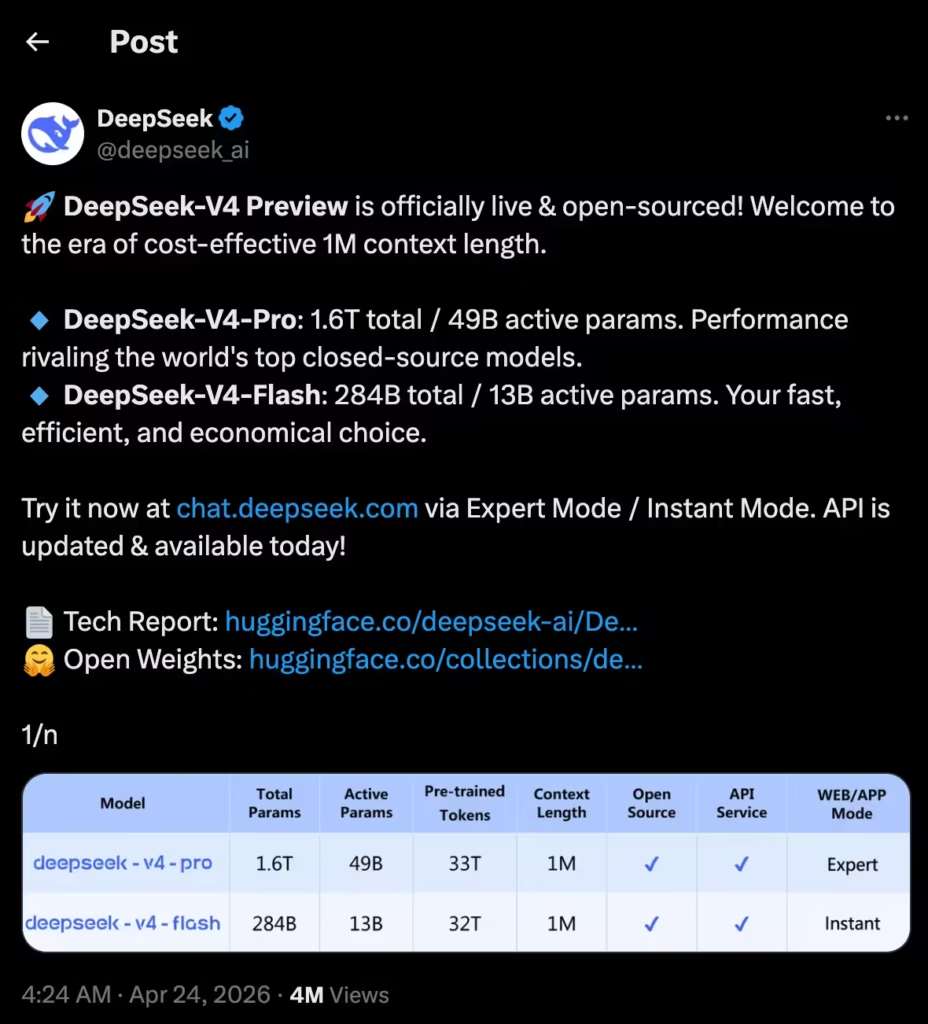

DeepSeek released a preview of its next-generation AI model, V4, on Friday, April 24, 2026.

The Hangzhou-based startup says the model can go head-to-head with closed-source systems from OpenAI, Google, and Anthropic – the biggest names in the American AI industry.

The model comes in two versions.

DeepSeek-V4-Pro packs 1.6 trillion total parameters with 49 billion active. V4-Flash is the leaner option at 284 billion total parameters and 13 billion active.
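For a sense of scale, here is a quick back-of-the-envelope sketch that uses only the parameter counts quoted above. It assumes "active" means the parameters actually used for each token, which is the usual meaning of the term but is not spelled out in DeepSeek's announcement. The dictionary and function names are purely illustrative.

```python
# Parameter counts as quoted in the article, in billions.
MODELS = {
    "DeepSeek-V4-Pro": {"total_b": 1600, "active_b": 49},
    "DeepSeek-V4-Flash": {"total_b": 284, "active_b": 13},
}


def active_share(total_b: float, active_b: float) -> float:
    """Return active parameters as a percentage of total parameters."""
    if total_b <= 0:
        raise ValueError("total_b must be positive")
    return 100.0 * active_b / total_b


for name, params in MODELS.items():
    share = active_share(params["total_b"], params["active_b"])
    print(f"{name}: {share:.1f}% of parameters active")
```

By this rough measure, only about 3% of V4-Pro and under 5% of V4-Flash is in use at any one time.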

Both support a massive one-million-token context window, meaning they can take in and keep track of far more text in a single conversation than most competing models.

And just like previous DeepSeek releases, V4 is fully open-source. Anyone can download it, run it, and modify it.

Why Coding and AI Agents Are the Big Deal

DeepSeek is making noise about V4’s coding abilities.

The company claims the model leads all current open-source models in coding, math, and STEM benchmarks. That matters a lot right now because coding is the engine behind AI agents – tools that can act on your behalf.

V4 already works with popular agent tools like OpenClaw and Anthropic’s Claude Code. DeepSeek says it even uses V4 internally for its own agentic coding work.

In a market where companies are racing to build AI that doesn’t just chat but actually does things, strong coding performance is a serious advantage.

The Huawei Connection Changes the Game

Here’s the part that carries geopolitical weight.

DeepSeek built V4 to run on domestic Chinese chips, specifically Huawei’s Ascend 950 processors.

Huawei confirmed it supports DeepSeek with its “Supernode” technology, which clusters large groups of Ascend chips to deliver the computing power needed for training massive AI models.

This is a major shift. DeepSeek’s earlier R1 model relied on Nvidia hardware.

But U.S. export controls have cut off Chinese companies’ access to the most advanced American chips. So Chinese developers have had no choice but to work with homegrown alternatives.

According to Wei Sun, an analyst at Counterpoint Research, V4’s reliance on chips from Huawei and Cambricon, another Chinese chipmaker, could make it more impactful than R1 in the long run.

If AI systems can perform at a high level without Nvidia, it weakens the leverage of U.S. chip restrictions.

It also opens the door for faster AI adoption in countries that face similar export barriers.

A Year After the R1 Shock

DeepSeek first grabbed global headlines in early 2025 with its R1 reasoning model.

R1 stunned the industry because it delivered impressive performance at a fraction of the training cost of comparable American systems.

Tech stocks tumbled. Assumptions about how much money you needed to build top-tier AI got flipped upside down.

V4 arrives with similarly high ambitions but also some unanswered questions.

DeepSeek hasn’t disclosed what V4 cost to train. U.S. officials have previously accused the company of using banned Nvidia chips. Anthropic has also claimed that DeepSeek used Claude’s outputs to improve its own models.

DeepSeek hasn’t directly addressed these allegations in connection with the V4 release.

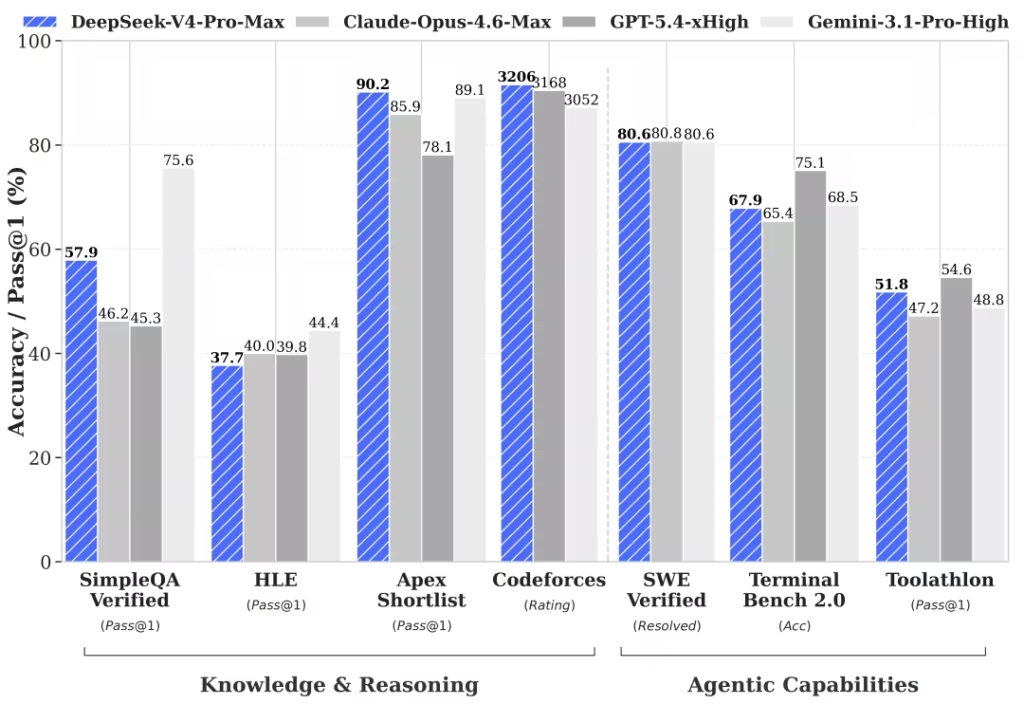

How V4 Stacks Up on Benchmarks

The company shared benchmark results showing V4-Pro outperforming all open-source competitors in world knowledge and trailing only Google’s closed-source Gemini 3.1 Pro.

On reasoning tasks, DeepSeek says V4 rivals the best closed-source models available anywhere.

V4 also introduces a new “Hybrid Attention Architecture” that makes long-context processing far more efficient. In a one-million-token setting, V4-Pro uses just 27% of the computing power and 10% of the memory that the previous V3.2 model needed.

That’s a massive efficiency gain – and it feeds directly into the cost story that made DeepSeek famous in the first place.
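To make those percentages concrete, the short sketch below converts them into reduction factors relative to V3.2. It relies only on the 27% and 10% figures DeepSeek reported for the one-million-token setting. The function name is illustrative, and the result is simple arithmetic, not an independent measurement.

```python
def reduction_factor(fraction_of_baseline: float) -> float:
    """Convert 'uses X of the baseline' into an 'N times less' factor."""
    if not 0 < fraction_of_baseline <= 1:
        raise ValueError("fraction_of_baseline must be in (0, 1]")
    return 1.0 / fraction_of_baseline


# Figures reported by DeepSeek for V4-Pro versus V3.2 at one million tokens.
compute_fraction = 0.27
memory_fraction = 0.10

print(f"Compute: roughly {reduction_factor(compute_fraction):.1f}x less")
print(f"Memory:  roughly {reduction_factor(memory_fraction):.1f}x less")
```

In other words, the reported numbers work out to nearly a fourfold cut in compute and a tenfold cut in memory for the longest inputs.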

What Comes Next

V4 is labeled a “preview,” so refinements are likely coming.

DeepSeek has also announced that its older models (deepseek-chat and deepseek-reasoner) will be fully retired by July 24, 2026, with their traffic already being routed through V4-Flash.

For now, the AI race between the U.S. and China just got another jolt. DeepSeek keeps proving that you don’t need the biggest budget or the fanciest chips to build competitive AI.

Whether that’s inspiring or alarming probably depends on which side of the Pacific you’re sitting on.