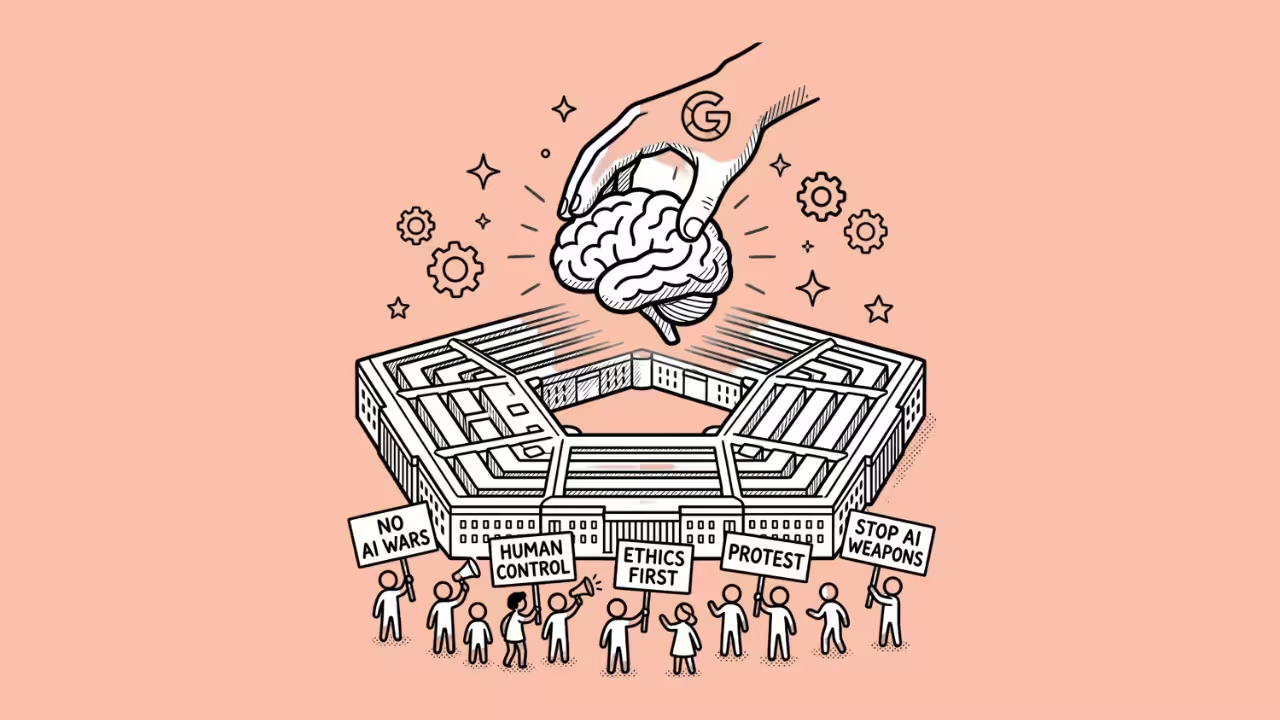

Over 560 Google workers signed a letter pleading with their CEO to say no.

He said yes anyway.

The Deal Nobody Was Supposed to See

Google has signed a classified agreement with the U.S. Department of Defense that lets the Pentagon use its AI models for “any lawful government purpose.”

The Information broke the story on Tuesday, April 28, 2026, citing a single source familiar with the deal.

The timing couldn’t be more awkward.

Just one day earlier, more than 560 Google employees sent an open letter to CEO Sundar Pichai urging him to block the Pentagon from accessing Google’s AI for classified work. They warned the technology could be used in “inhumane or extremely harmful ways.”

Pichai clearly had other plans.

What the Contract Actually Says

According to The Information’s report, the deal includes language stating that Google’s AI shouldn’t be used for domestic mass surveillance or autonomous weapons without “appropriate human oversight and control.”

That sounds reassuring on paper.

But here’s the catch. The contract also states that Google has no “right to control or veto lawful government operational decision-making.”

In other words, those restrictions aren’t enforceable. Google can’t actually stop the Pentagon from doing anything it considers legal.

The deal also requires Google to help adjust its AI safety settings and filters whenever the government asks. A Google spokesperson told Reuters the agreement is an amendment to an existing government contract. The company called it a “responsible approach to supporting national security.”

Google Joins a Growing Club

This deal puts Google alongside OpenAI and Elon Musk’s xAI, both of which have their own classified AI agreements with the Pentagon.

According to Reuters, the Pentagon signed deals worth up to $200 million each with major AI labs in 2025.

Classified networks handle some of the military’s most sensitive work, including mission planning and weapons targeting. The Pentagon has said it doesn’t intend to use AI for mass surveillance of Americans or for weapons without human involvement.

But it wants full flexibility to use AI for any lawful purpose, and it’s not willing to let tech companies draw those lines.

What Happened to Anthropic

One major AI company tried to push back.

Anthropic, the maker of Claude, insisted on keeping guardrails that prevented its models from being used for fully autonomous weapons or domestic mass surveillance.

The Pentagon didn’t like that.

In late February 2026, Defense Secretary Pete Hegseth declared Anthropic a “supply chain risk.” President Trump followed with a directive ordering federal agencies to stop using Anthropic’s technology. It was the first time an American company had received that designation, a label historically reserved for foreign adversaries.

Anthropic sued. A federal judge in San Francisco granted a preliminary injunction blocking the broader government ban. But a D.C. appeals court let the Pentagon’s supply chain designation stand, meaning the company remains locked out of new defense contracts.

The Message Is Clear

The Anthropic situation sends a loud signal to every AI company: play ball with the Pentagon or face consequences.

Google, OpenAI, and xAI all signed deals that give the military broad access. Anthropic drew a line and got blacklisted for it.

As one Council on Foreign Relations expert told CNBC, the dispute “feels like it is about politics and personalities” masquerading as a policy disagreement.

Google Employees Aren’t Staying Quiet

The internal letter from Google workers didn’t mince words.

Employees wrote that their “proximity to this technology creates a responsibility to highlight and prevent its most unethical and dangerous uses.”

They specifically cited lethal autonomous weapons and mass surveillance as their biggest fears.

The letter also warned that “making the wrong call right now would cause irreparable damage to Google’s reputation, business and role in the world.”

This isn’t the first time Google employees have pushed back on military work. In 2018, thousands protested Project Maven, a Pentagon contract for AI-powered drone surveillance. Google eventually pulled out of that deal. This time, the company chose differently.

The Bigger Picture

There’s a real tension at the heart of this story.

The U.S. government wants unrestricted access to the most powerful AI systems on the planet. Tech companies want those lucrative contracts.

And the guardrails that are supposed to prevent misuse? They’re written into contracts that the government doesn’t have to follow.

Meanwhile, the White House is quietly negotiating with Anthropic about accessing its newest model, Mythos, for civilian agencies, even as the Pentagon keeps the company blacklisted.

The contradictions are hard to miss.

Whether you see this as a necessary step for national security or a troubling erosion of AI safety principles probably depends on how much trust you place in the phrase “any lawful government purpose.”