X, formerly Twitter, has announced a new enforcement policy targeting creators who post AI-generated conflict videos without labeling them as such.

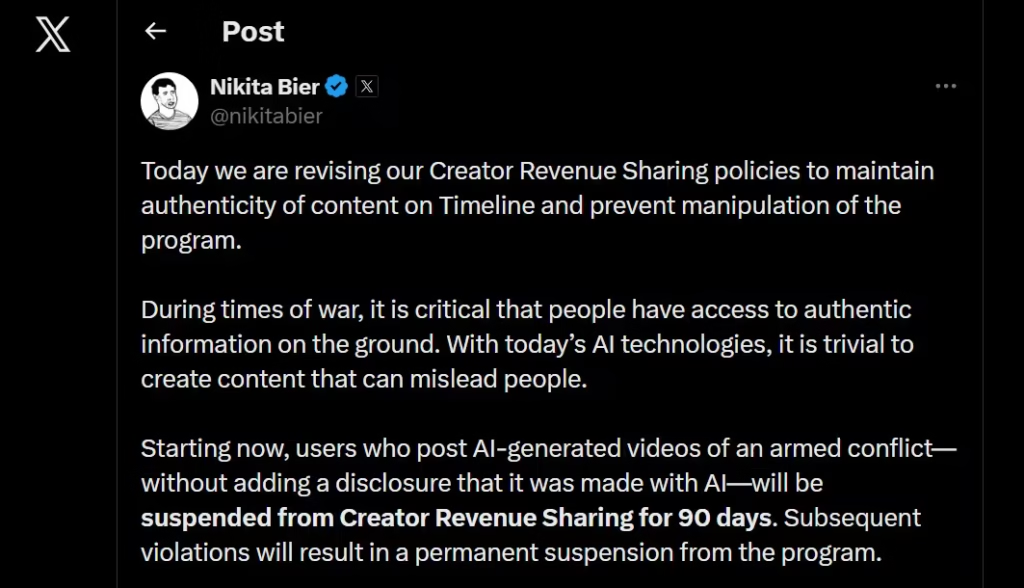

The announcement came from Nikita Bier, the company’s head of product, who said that users who post AI-generated war videos without disclosure will face penalties.

These penalties hit earnings directly: they apply to a creator’s participation in X’s Creator Revenue Sharing Program.

Bier emphasized that during war, people need access to real information. However, AI tools now make it easy to create highly convincing but false content.

New Rule

The policy states that creators must disclose when content is generated using AI. Those who fail to do so face a 90-day suspension from the Creator Revenue Sharing Program.

Repeat violations result in a permanent ban from the program. Creators can still post AI content, but they must be transparent about it or risk losing income.

Why Now?

Today, almost anyone can create realistic war footage in minutes. That is a serious problem, because misleading videos can spread quickly, shape public opinion, and in some cases influence how people respond to real-world events.

Armed conflict content carries emotional weight. That’s why it demands accuracy.

To enforce the rule, X will rely on Community Notes, internal moderation systems, and detection tools that identify generative AI content.