The U.S. military wants to become an “AI-first fighting force.”

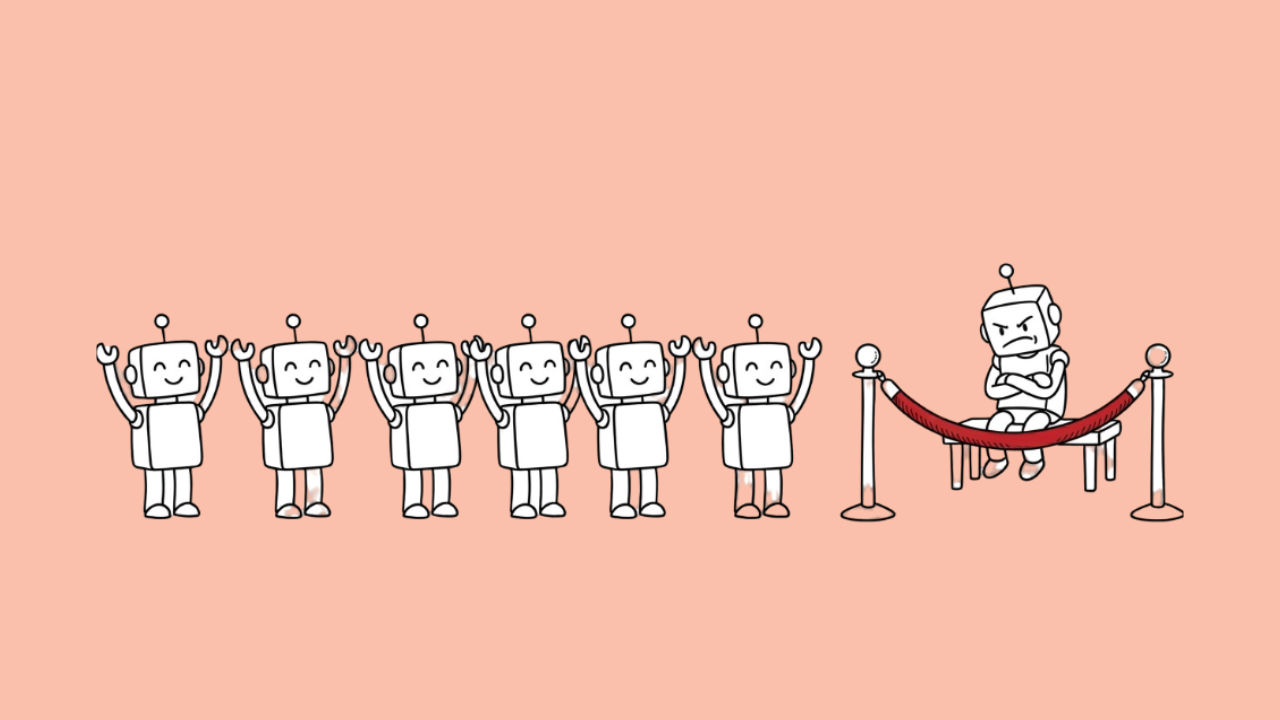

It just picked its team, and the one company that said no to removing safety guardrails got left out.

Seven Companies, One Big Announcement

On Friday, May 1, 2026, the Department of Defense announced agreements with seven major tech companies to deploy AI on its most secure classified networks.

The list includes OpenAI, Google, Microsoft, Amazon Web Services, Nvidia, Elon Musk’s SpaceX, and a newer startup called Reflection, which is backed by Nvidia.

Oracle was added to the list just hours later, bringing the total to eight.

These deals cover what the Pentagon calls “Impact Level 6 and 7” environments – the military’s most sensitive classified networks. That’s where mission planning, intelligence analysis, and weapons targeting happen.

The Pentagon’s statement made its goals clear.

It wants these agreements to help build “an AI-first fighting force” that gives American troops “decision superiority across all domains of warfare.”

Who’s In – and Who’s Not

Some of these partnerships aren’t new.

OpenAI and xAI already had classified deals with the Pentagon.

Microsoft and Amazon have deep, longstanding relationships with the Defense Department through cloud services. Google’s classified deal was reported just days ago.

The new additions are Nvidia and Reflection. SpaceX’s inclusion is also notable – Musk’s companies now hold multiple classified government contracts.

But the biggest story is who’s missing: Anthropic.

Why Anthropic Got Left Behind

Anthropic’s Claude was actually the first AI model deployed on the Pentagon’s classified networks.

The company signed a $200 million contract with the Defense Department last year and was deeply embedded in military operations through Palantir’s Maven toolkit.

Then things fell apart.

The Pentagon demanded that all AI companies allow their models to be used for “any lawful purpose.” Anthropic pushed back.

It wanted guarantees that Claude wouldn’t be used for fully autonomous weapons or domestic mass surveillance. The Defense Department saw those limits as unacceptable.

In late February 2026, Defense Secretary Pete Hegseth declared Anthropic a “supply chain risk” – a label previously reserved for foreign adversaries. President Trump followed with a directive to cut ties with the company entirely.

Anthropic Fought Back in Court

Anthropic didn’t go quietly.

It sued the federal government, arguing the blacklisting was unconstitutional retaliation.

A federal judge in San Francisco agreed to block the broader government ban.

But a D.C. appeals court let the Pentagon’s supply chain designation stand, keeping the company locked out of defense contracts.

The legal battle continues.

The Pentagon’s CTO Takes a Shot

Pentagon CTO Emil Michael didn’t hold back on Friday.

In a CNBC interview, he took a thinly veiled swipe at Anthropic.

He said the military learned it was “irresponsible to be reliant on any one partner” – especially one that didn’t want to work with the Pentagon on its terms.

But Michael also described Anthropic’s newest model, Mythos, as a “separate national security moment.”

He admitted the military needs to evaluate the model’s ability to find cyber vulnerabilities, even as the company remains blacklisted.

That contradiction is hard to ignore.

Reports suggest the NSA is already quietly using Mythos, and the White House has been in active discussions with Anthropic about deploying it across civilian agencies.

What This Means for AI Safety

This wave of deals sends a clear message. The Pentagon wants AI without restrictions. Companies that agree get massive contracts. Companies that refuse get punished.

Every other major AI lab has now accepted the Pentagon’s “any lawful purpose” language. Only Anthropic drew a line, and it paid for it. Whether you see that as principled or foolish depends on your perspective. But the precedent is set.

CNN noted that signing so many of Anthropic’s competitors could give the Trump administration leverage in its ongoing dispute with the company.

Meanwhile, Anthropic is losing out on revenue that its rivals can now tap.

And there is a lot of money at stake: last year’s One Big Beautiful Bill Act included significant Pentagon funding for AI and offensive cyber operations.

The Speed of It All

Here’s another detail worth noting. Getting new software onto top-secret military networks used to take up to 18 months. The Pentagon has now cut that timeline to under three months.

That speed reflects how seriously the military is taking the AI arms race – both with foreign adversaries and within its own tech supply chain.

The Pentagon also stressed it wants to avoid “vendor lock-in.” With eight companies now on board, the military has options. If one company causes problems or falls behind, there are seven others waiting in line.

The Question Nobody Can Dodge

AI is moving into the most sensitive corners of American military power.

The companies building it have agreed to let the government use it however it sees fit – as long as it’s “lawful.”

The only company that tried to limit what that word could cover got blacklisted and ended up fighting the government in court.

That’s the reality of AI in 2026. The technology is too powerful and too valuable for either side to walk away.

The only question left is who gets to decide where the lines are drawn – the people who build it, or the people who wield it.