Generative AI is no longer just a user-facing productivity tool. It is now embedded into internal automation pipelines, customer workflows, development environments, and autonomous agents.

AI agents can read databases, call APIs, modify configurations, and trigger external services. That power introduces a new security surface:

- Prompt injection

- Data exfiltration through LLM interactions

- Agent manipulation

- API abuse

- Model misconfiguration

- Over-privileged non-human identities

In 2026, Gen AI security platforms are evolving to protect not just endpoints and networks, but the AI control plane itself.

Below are five of the most relevant Gen AI security solutions this year.

Check Point – GenAI Security

Check Point approaches Gen AI security as an extension of enterprise-wide threat prevention.

GenAI Protect focuses on monitoring prompt interactions across both sanctioned and unsanctioned generative AI applications. Instead of relying on regex-style pattern matching, it uses semantic analysis to classify the intent of prompts and enforce DLP policies in real time.

This matters because prompt injection and data leakage do not always contain obvious keywords. Context-aware enforcement is necessary when AI tools are integrated into IDEs, browsers, and internal systems.

ThreatCloud AI underpins the system with large-scale threat telemetry, allowing rapid propagation of compromise indicators across network, endpoint, and cloud layers.

For AI builders, the key differentiator is unified enforcement. The same platform that protects infrastructure can extend controls to AI usage without introducing a separate stack.

Best for: Enterprises wanting integrated, prompt security and AI infrastructure protection inside an existing security architecture.

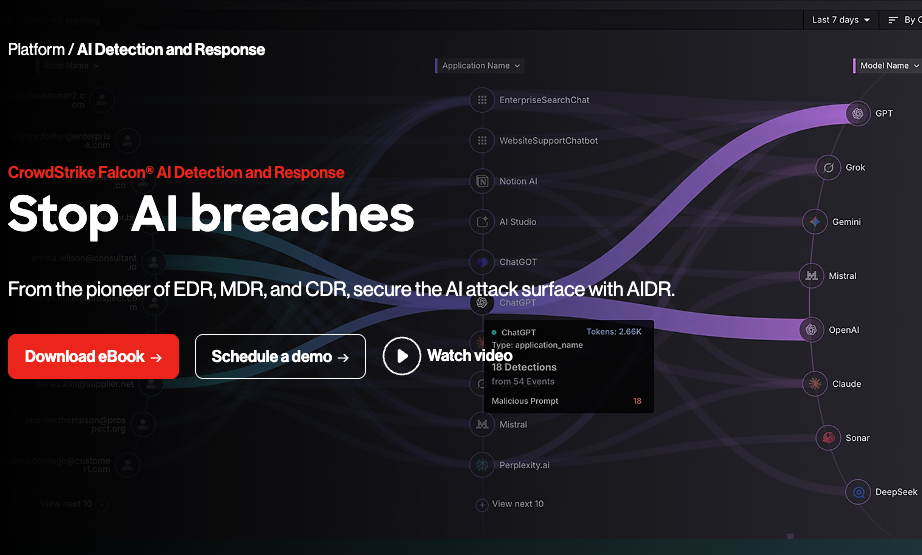

CrowdStrike – Falcon AI Detection & Response

CrowdStrike expands endpoint telemetry into the AI layer through Falcon AI Detection & Response.

The platform is designed to identify prompt injection techniques, agent misuse, and AI-driven anomaly patterns. Because Falcon already collects endpoint, identity, and cloud telemetry, AI signals are layered into an existing detection fabric.

Charlotte AI assists analysts with natural-language investigations, enabling security teams to query complex events conversationally. This aligns with how many teams now interact with AI systems internally.

For AutoGPT-style agent environments, endpoint and identity correlation becomes critical. Agents often operate through API tokens and service identities, which means agent compromise can propagate laterally across systems.

Best for: Organizations already using Falcon that want AI-layer detection integrated with endpoint and cloud signals.

Cisco – Secure AI Access

Cisco addresses AI security at the network and access layer.

Secure AI Access provides visibility into AI-related traffic flows, including background API calls, model endpoints, and agent communications. In distributed AI architectures, traffic-level inspection can reveal model misuse or data exfiltration attempts that endpoint tools miss.

The platform integrates into Cisco’s Security Service Edge stack, enforcing access controls and policy guardrails for AI usage. This is especially relevant in environments where AI agents connect to multiple external APIs.

For organizations building autonomous agents that call external services dynamically, controlling outbound AI traffic is as important as controlling inbound threats.

Best for: Enterprises wanting network-level AI visibility and access control across distributed systems.

Palo Alto Networks – Secure AI Access

Palo Alto Networks integrates AI security into its SASE and cloud-delivered security stack.

Secure AI Access focuses on inspecting and controlling AI-driven traffic between users, applications, and generative AI services. It enforces policy controls to mitigate data leakage, unauthorized AI tool usage, and API misuse.

For AI-native companies deploying agents in cloud-native architectures, SASE-based AI controls can provide centralized governance across remote workforces and distributed cloud workloads.

The integration into existing firewall and SASE layers simplifies policy management compared to deploying standalone AI security tools.

Best for: Organizations standardized on Palo Alto Networks looking to extend SASE policies to generative AI workflows.

IBM – AI Security Integration

IBM approaches Gen AI security through governance and integration.

Rather than focusing exclusively on prompt injection or agent control, IBM emphasizes embedding AI security into broader data protection and risk management processes. This includes model governance, data access control, and alignment with compliance.

For enterprises deploying AI internally at scale, governance becomes as critical as technical enforcement. Model lifecycle management, access review, and auditability are central themes.

IBM’s strength lies in integration into large, complex environments where AI initiatives intersect with regulatory requirements and data security frameworks.

Best for: Large enterprises needing AI security integrated into broader governance and compliance programs.

Comparison Summary

| Vendor | Primary Control Layer | Ideal Use Case |

| Check Point | Unified infrastructure + prompt security | Enterprises consolidating AI controls |

| CrowdStrike | Endpoint + AI detection | Falcon-centric environments |

| Cisco | Network-layer AI access control | API-heavy AI ecosystems |

| Palo Alto Networks | SASE-integrated AI governance | Distributed workforce AI usage |

| IBM | Governance and compliance integration | Regulated enterprises scaling AI |

How to Choose the Right Gen AI Security Solution

If you are building agents, focus on identity and API controls.

If you are concerned about prompt injection and employee use of AI, prioritize semantic DLP enforcement.

If you operate distributed systems with heavy API traffic, network-layer inspection may be critical.

Gen AI security is not a separate product category. It is an extension of identity, network, cloud, and data security into the AI control plane.

In 2026, the most secure AI deployments are not those with the most AI features, but those with integrated guardrails across every layer the agent touches.