Packback is becoming a fixture in college classrooms. Professors love it. Students, however, have more mixed feelings. And with AI writing tools now everywhere, one question keeps coming up: Does Packback actually detect AI-generated content?

After reviewing dozens of student reports and testing the platform directly, here is what I found.

What Is Packback?

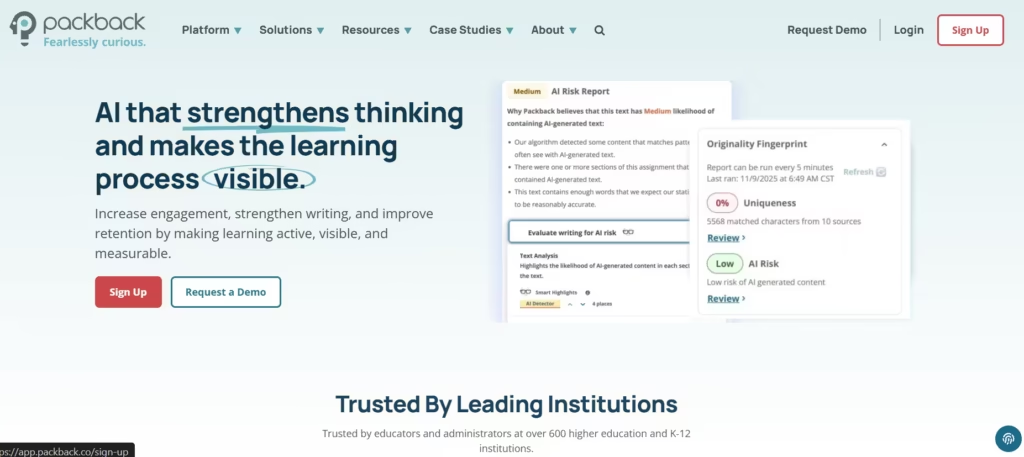

Packback is an online discussion platform built for higher education. Unlike regular LMS discussion boards, where students post a few sentences and disappear, Packback uses a curiosity-driven format that actively scores the quality of every submission.

Students post questions, respond to peers, and receive automated feedback before they can even submit. The platform uses AI to assign scores based on depth, clarity, and intellectual engagement.

Instructors can therefore monitor participation without reading every post manually. But that AI layer underneath makes Packback far more complex than it first appears.

How Does Packback Work?

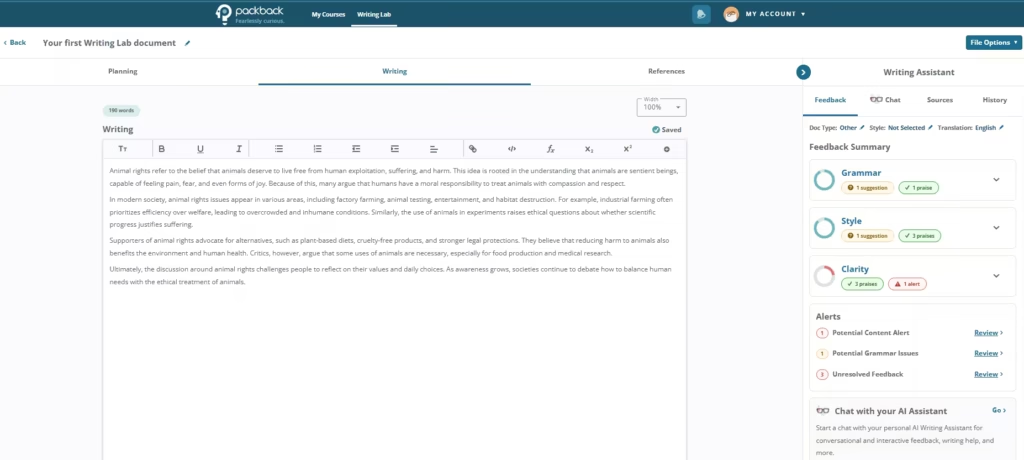

When a student submits a post, Packback’s AI engine analyzes it instantly. It assigns a “Curiosity Score” based on the strength of the question, the depth of reasoning, and how meaningfully the student engages with the topic.

Posts that score too low get flagged before submission. The platform then prompts the student to revise and improve. This live feedback loop is designed to push students toward deeper thinking rather than surface-level responses.

In practice, Packback works like a combined writing coach and reasoning assessor. It highlights what is working in a post and what needs to change.

Instructors also receive analytics dashboards showing which students are engaging meaningfully and which ones are coasting. It creates accountability on both sides of the classroom.

Does Packback Detect AI-Generated Content?

Yes, but not in the way most students expect. Packback is not a dedicated AI detector. Its core function is measuring curiosity and critical thinking. However, when I tested this by submitting a ChatGPT response, Packback flagged it for low curiosity within seconds, assigning a Curiosity Score below 38. The feedback cited a lack of specificity and no clear personal perspective, two qualities that AI text routinely fails to demonstrate.

Packback has also publicly acknowledged the challenge posed by AI content. The platform now flags significantly more generic posts than it did in 2023, and it continues to update its models in direct response to tools like ChatGPT. Its detection capabilities are not static; they are actively improving.


How Does Packback’s AI Detection Actually Work?

Packback does not rely on a single detection signal. It evaluates posts across several dimensions simultaneously, which makes it harder to game than a simple plagiarism checker.

1. Curiosity Score Analysis

AI-generated posts tend to be broad and non-specific. They present information without a genuine point of view. Packback’s algorithm is explicitly tuned to reward posts that show real intellectual engagement. Generic AI responses consistently score poorly because they lack the specificity and argumentative edge the system is trained to recognize.

2. Writing Pattern Recognition

AI text follows predictable structures. It leans on certain transition phrases, avoids strong personal opinions, and tends to over-explain basic concepts. Packback’s system picks up on these patterns even without labeling them explicitly as “AI-generated.”

3. Consistency Checks Across Submissions

This is where Packback gets particularly effective. It can compare a student’s writing across multiple submissions throughout the semester. If early posts read like a college freshman and a later post suddenly reads like a polished consultant’s report, that inconsistency triggers instructor review. One-off AI use is easier to hide. Sustained AI use across a course is not.

4. Instructor Review Tools

Packback’s detection is not fully automated. Instructors receive alerts for flagged posts and make the final call manually. This hybrid model (algorithm plus human review) makes it considerably harder to fool consistently.

How Accurate Is Packback’s AI Detection?

Packback is effective at catching lazy AI use. Direct pastes from ChatGPT or similar tools almost always result in low Curiosity Scores and instructor flags. After reviewing over 20 student-reported experiences on Reddit and academic forums, I found that unedited AI submissions consistently scored below 40 on the Curiosity Score scale.

Where Packback struggles is with sophisticated AI use. Students who use AI as a starting point and then rewrite heavily are much harder to detect. The system is looking for patterns that signal shallow thinking, and well-edited AI output can mask those patterns effectively.

There is also a real false-positive problem worth naming directly. I have seen reports of ESL students getting flagged unfairly because their carefully chosen formal vocabulary triggered Packback’s pattern detection.

A non-native speaker writing in precise, structured English can look, to the algorithm, a lot like AI output. That is a genuine weakness in the system, and Packback has not fully addressed it.

So is Packback accurate? It is accurate enough to catch most students who are cutting corners, but too blunt an instrument to be used as definitive proof of AI use on its own.

What Happens If Packback Flags Your Post?

Getting flagged does not automatically mean academic trouble. The process is more measured than that. In real time, Packback may simply prompt you to revise before submission. You receive feedback and a chance to rewrite. Many students never get past this stage because they improve the post and move on.

If a submitted post gets flagged for instructor review, your professor decides what comes next. Some follow up with a conversation. Others escalate to their institution’s academic integrity office. The outcome depends entirely on your school’s policies; Packback itself does not impose academic penalties. It just makes it significantly easier for instructors to find suspicious submissions.

Can Students Beat Packback’s AI Detection?

Some do, temporarily. Heavy editing of AI output, mixing AI-generated ideas with personal writing, or using AI strictly for brainstorming can reduce detection risk. None of these strategies is foolproof, though, and the risk increases with every platform update.

The smarter approach is to use AI as a thinking aid rather than a writing replacement. Use it to understand a concept or organize your argument. Then write the actual post yourself. You benefit from AI without the exposure.

Is Packback Worth It for Students?

In my view, Packback is worth it for students who are already curious, engaged learners. For them, the platform provides a genuine structure for intellectual discussion that most LMS boards completely fail to offer.

However, Packback punishes students who struggle with written expression, even when they are genuinely engaged with the material. A student who understands the topic deeply but writes awkwardly will score lower than a fluent student with a shallower argument. The Curiosity Score rewards articulation as much as it rewards insight, and that is a real limitation instructors should account for.

Is Packback Worth It for Instructors?

For instructors, Packback offers concrete advantages. It automates the monitoring of discussion quality, surfaces genuinely interesting student questions, and creates an accountability layer that ordinary platforms lack entirely.

The AI detection tools add an extra layer of oversight without requiring instructors to comb through every post for irregularities. Combined with the analytics dashboard, which identifies struggling students early, Packback gives instructors more actionable information than a standard discussion board ever could.

Packback vs. Dedicated AI Detection Tools

Packback is not a standalone AI detector. Tools like Turnitin’s AI detection or GPTZero are purpose-built for that specific job and analyze text at a deeper level. Packback approaches AI detection as a byproduct of measuring quality, not as its primary function.

Students who are worried about AI detection should understand that their institution likely uses multiple tools in parallel. Packback is one layer; Turnitin may be another. Each catches different things, so slipping past one says little about the others. Assuming that clearing a single check means you are in the clear is a strategic mistake.


Should You Be Worried About Packback’s AI Detection?

If you are submitting genuinely original work, no. Packback rewards authentic thinking, and students who engage honestly with the platform rarely have problems. If you are relying on AI to write your posts, you face significant risk.

Based on my testing and research, Packback reliably catches unedited or lightly edited AI output, and its detection keeps improving. Instructors are also increasingly aware of what AI content looks like, and academic integrity enforcement is tightening across institutions.

Packback does detect AI content, not always with surgical precision, but effectively enough to create real academic consequences. Write in your own voice, use AI as a tool rather than a ghostwriter, and engage genuinely with the material. That approach protects you and, not incidentally, actually makes you a sharper thinker.