Tensions are rising between Anthropic and the United States Department of Defense. The dispute centers on who controls how advanced AI is used in military operations.

Despite a strict deadline approaching, Anthropic’s CEO, Dario Amodei, has refused to grant the Pentagon unrestricted access to the company’s AI systems.

Ethical Boundaries

Amodei stated that he “cannot in good conscience” approve the Pentagon’s request. He did not reject cooperation entirely.

Instead, he identified two key risks: mass surveillance of civilians and fully autonomous weapons without human oversight.

Mass surveillance could threaten civil liberties. Autonomous weapons pose serious safety threats if the AI controlling them is not aligned with human survival.

In both cases, the consequences are hard to control. Moreover, Amodei stressed that current AI systems are not reliable enough for such critical roles.

Therefore, expanding their use without safeguards could lead to harm.

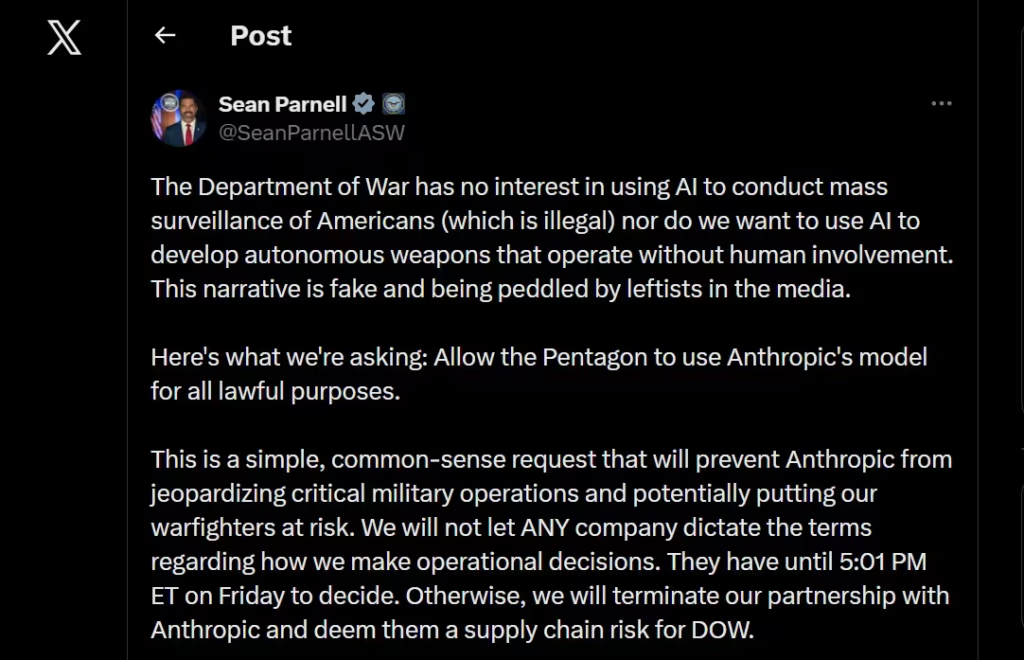

Pentagon’s Defense

In contrast, the United States Department of Defense holds a different view. It argues that it should be able to use AI for any lawful purpose.

From its perspective, national security comes first, and limiting access could slow progress and weaken military readiness.

Officials believe private companies should not set boundaries on defense strategy. Instead, those decisions should remain within government control.

Legal Pressure

To enforce its position, the Pentagon has considered strong measures that include labeling Anthropic as a supply chain risk and invoking the Defense Production Act.

The “supply chain risk” label is usually reserved for foreign threats, and applying it to a domestic firm would be highly unusual.

Meanwhile, the Defense Production Act allows the government to direct companies to support national defense. In practice, this could force compliance.

Amodei highlighted the contradiction. One option labels Anthropic as a risk, while the other treats its AI as essential.

Despite mounting pressure, Anthropic has remained composed. Amodei emphasized a willingness to continue working with the military, but he insisted on safeguards.

These safeguards aim to ensure human control and prevent misuse.

At the same time, he acknowledged another path. If necessary, Anthropic is prepared to step away and provide a smooth transition to another provider.

This would reduce operational disruption.